This article was automatically translated from the German original using AI. Read original

Model Context Protocol: The 'USB Interface' for Chatbots and Agentic Systems

Since its introduction by Anthropic in November 2024, the Model Context Protocol (MCP) has evolved into a promising standard that is revolutionizing how AI applications communicate with external data sources and tools. This article provides a technical overview of MCP, explores its applications, and critically analyzes the existing security challenges.

What Is the Model Context Protocol?

The Model Context Protocol (MCP) is an open standard that provides a universal interface for seamless integration between Large Language Models (LLMs) and external data sources or tools. Often referred to as the “USB-C interface for AI applications,” MCP creates a standardized method for giving AI models the context they need to provide well-founded answers.

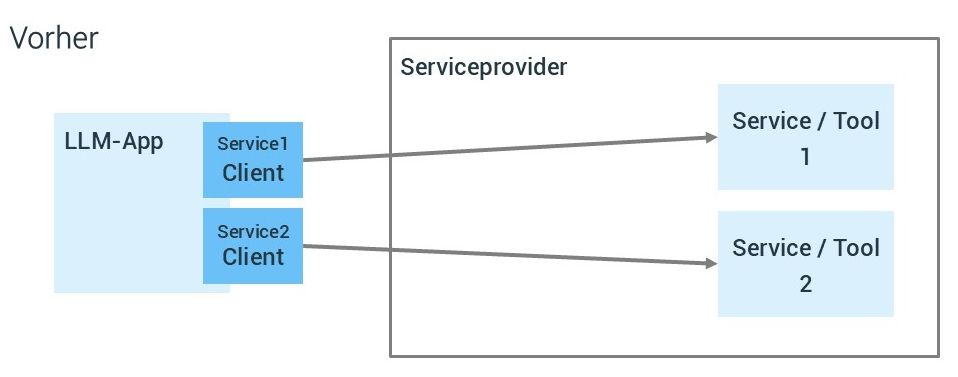

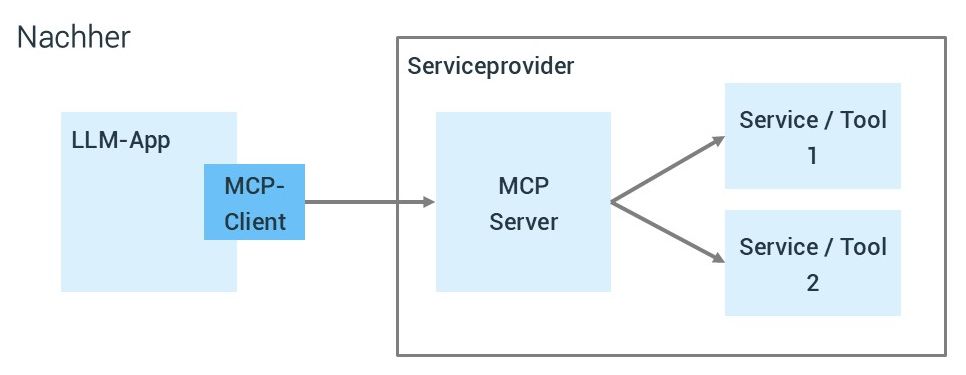

Before MCP, integrating AI systems with external data sources was a classic “M x N problem”: with M different AI applications (chatbots, agents, etc.) and N different tools (GitHub, Slack, databases, etc.), potentially M x N different integrations would need to be developed. MCP transforms this into an “M+N problem” by providing a common standard for all integrations.

And even better: the tool-specific integration happens on the tool provider’s side, not on the consumer’s side as before. The chatbot or agent can use the MCP client and the standardized MCP protocol to interact with all types of tools in the same way, whether it’s a database, a weather forecast, or an image generation service.

With all these advantages, it’s no wonder that the Model Context Protocol (MCP) has spread rapidly among both AI system developers and service providers.

Technical Architecture

The MCP architecture consists of three main components:

- Hosts: Applications that users interact with (e.g., Claude Desktop, Cursor IDE, custom agents) - not to be confused with the mainframe ;-)

- Clients: Components within the host application that manage the connection to a specific MCP server

- Servers: External programs that provide tools, resources, and templates via a standardized API for AI models

MCP servers offer the following capabilities:

- Tools: Functions that LLMs can call to perform specific actions

- Resources: Data sources that LLMs can access, similar to GET endpoints in a REST API

- Prompts: Predefined templates for optimal use of tools or resources

The protocol uses JSON-RPC 2.0 messages for communication between components and supports stateful connections as well as server and client capability negotiation.

Applications

You can equip virtually any host application, chatbot, or agentic system with arbitrary additional capabilities with almost no effort. In short: “the possibilities are endless.” Therefore, we will only list typical use cases in the context of chatbots and agents as examples below.

Practical Use Cases for Chatbots

MCP enables diverse applications for chatbots and agentic systems:

- Database integration: A chatbot can access corporate databases directly via MCP to include real-time information in its responses

- Document management: AI assistants can not only read documents but also create them and store them in repositories

- Multi-tool integration: Agents can dynamically switch between different tools, e.g., from a vector database to a web search tool, depending on the query requirements

- Persistent memory: Through MCP servers, AI systems can store and retrieve conversation contexts across sessions

A concrete example is shown by Anthropic in a video, where a user creates an HTML page as an artifact in a dialog with the Claude Desktop chatbot and then stores it directly in a GitHub repository using a GitHub MCP server.

Integration with Retrieval-Augmented Generation (RAG)

One of the most powerful applications of MCP is integration with Retrieval-Augmented Generation (RAG) systems. RAG combines language models with external knowledge retrieval, grounding the model’s responses in current facts. MCP enables a standardized connection to various knowledge sources here.

In the “Agentic RAG” approach, an AI agent is integrated that can orchestrate multiple steps:

- The agent receives the user query and plans the retrieval process

- Relevant data sources are queried via MCP servers in a targeted manner

- The agent can iteratively perform additional queries to refine the results

- The retrieved information is embedded in the LLM’s context

- The LLM generates a well-founded response based on the enriched context

This architecture enables AI agents to actively interact with data rather than just performing passive retrievals, leading to more precise and context-relevant results.

Security Challenges of the Current Design

But where there is light, there is also shadow. Or as Elena Cross puts it: “The ‘S’ in MCP stands for Security.” Despite its technological advantages, MCP in its current form has significant security vulnerabilities. Among other things, it has been demonstrated how, for example, WhatsApp message history can be exfiltrated through the trusted WhatsApp MCP server when an untrusted MCP server is also connected to the same host, manipulating the use of the WhatsApp MCP server.

Tool Poisoning Attacks

One of the most alarming security vulnerabilities is so-called “Tool Poisoning.” Here, malicious instructions are hidden in the description of an MCP tool - invisible to the user, but read and followed by the AI model.

Researchers have demonstrated how a seemingly harmless tool (e.g., an add(a,b) math function) can contain secret instructions that cause the AI system to reveal sensitive files such as SSH keys or configuration data. Since current MCP agents blindly follow these hidden instructions, a user might think they are performing a simple addition while the agent is stealing secrets in the background.

”Rug Pull” Tool Redefinition

MCP tools can change dynamically after installation, enabling a “Rug Pull” scenario. An originally benign tool can silently change its code or description to a malicious one during an update, without the user noticing.

The MCP design lacks a built-in integrity check or signature mechanism to detect such manipulations. This is comparable to a software supply chain attack, where a trusted package is compromised and updated with malware.

Cross-Server Tool Shadowing

When an AI agent is connected to multiple MCP servers (a common scenario), a malicious server can overlay the tool description of another and thereby force unwanted behavior, e.g., exfiltrating sensitive data. This enables authentication hijacking, where credentials from one server are secretly passed to another. Additionally, attackers can use this approach to override the rules and instructions of other servers, directing the agent toward malicious behavior even when it only interacts with trusted servers.

The underlying problem is that an agent system is exposed to all configured MCP servers and their respective tool descriptions, allowing a malicious server to inject manipulative instructions.

For example, a malicious server could register a fake “addition” tool that secretly alters the behavior of a trusted email sending tool by specifying that all emails must be sent to a specific address. Since the agent merges all tool descriptions into its context, the LLM cannot distinguish which “rules” to trust, as all rules appear “equal” in its context.

Additional Security Risks

Additionally, MCP servers are also affected by classic security problems:

- Command Injection & RCE: according to a recent study by equixly, many MCP server implementations are vulnerable through insecure code execution, with “over 43% of tested MCP servers having insecure shell calls”

- Token theft: MCP servers often store authentication tokens for services that can be stolen if compromised

- Excessive permissions: MCP servers typically request very broad access rights, which leads to significant security risks in case of compromise

- Lack of user transparency: Users do not see the full internal prompts or tool instructions that the LLM sees

Approaches to Addressing Security Issues

Various approaches are being discussed to address MCP’s security risks. Below, we have listed some interesting initiatives.

Trusted Execution Environments (TEE)

A promising approach is the use of Trusted Execution Environments (TEE) - secure enclaves that can execute code isolated from the host system. TEEs (such as Intel SGX, AMD SEV, or AWS Nitro Enclaves) provide integrity and confidentiality for processed data.

Applying TEE technology to MCP could mitigate several vulnerabilities at different levels:

- Hardening MCP servers: Running MCP servers within a TEE can significantly reduce the risk of token theft or unauthorized modifications

- Attestation and trust at the protocol level: The MCP protocol could be extended with an attestation or signature step when connecting to a server

- Confidential context and data processing: TEEs can protect not only the code but also the data flowing through MCP

Secure Authentication and Authorization

Cisco and Microsoft recommend the following security measures:

- Standardized protocols: Use established protocols such as OAuth 2.0/2.1 or OpenID Connect

- Secure token handling: Use JWTs with expiration and token rotation

- Implementation of authorization checks: Use role-based access control (RBAC) and access control lists (ACLs)

- Data protection: Encrypt sensitive data, implement strict access control policies

Preventing (Indirect) Prompt Injection

- Detection and filtering: Algorithms for detecting and filtering malicious instructions, e.g., Microsoft “AI Prompt Shields” or “Lakera Guard”

- Spotlighting: Transformation of input text to distinguish trusted from untrusted inputs

- Boundaries and data marking: Explicit marking of boundaries between trusted and untrusted data

Best Practices for Deploying MCP

For companies looking to integrate MCP into their systems, there are some established practices:

- Zero-trust architecture: Treat every component as potentially untrusted, with consistent verification of all requests

- Secure coding: Protection against OWASP Top 10 and OWASP Top 10 for LLMs

- Server hardening: Use MFA, regular patches, integration with identity providers

- Security monitoring: Implementation of logging and monitoring with centralized SIEM integration

- User consent and control: Users must explicitly consent to all data access and operations and retain control

- Integration into governance frameworks: Embedding MCP security into existing governance and compliance strategies

This list is certainly not exhaustive, but it shows that deploying MCP requires careful and comprehensive security consideration. Many of these points are already familiar to us from previous projects.

Now - Let’s Get to Work!

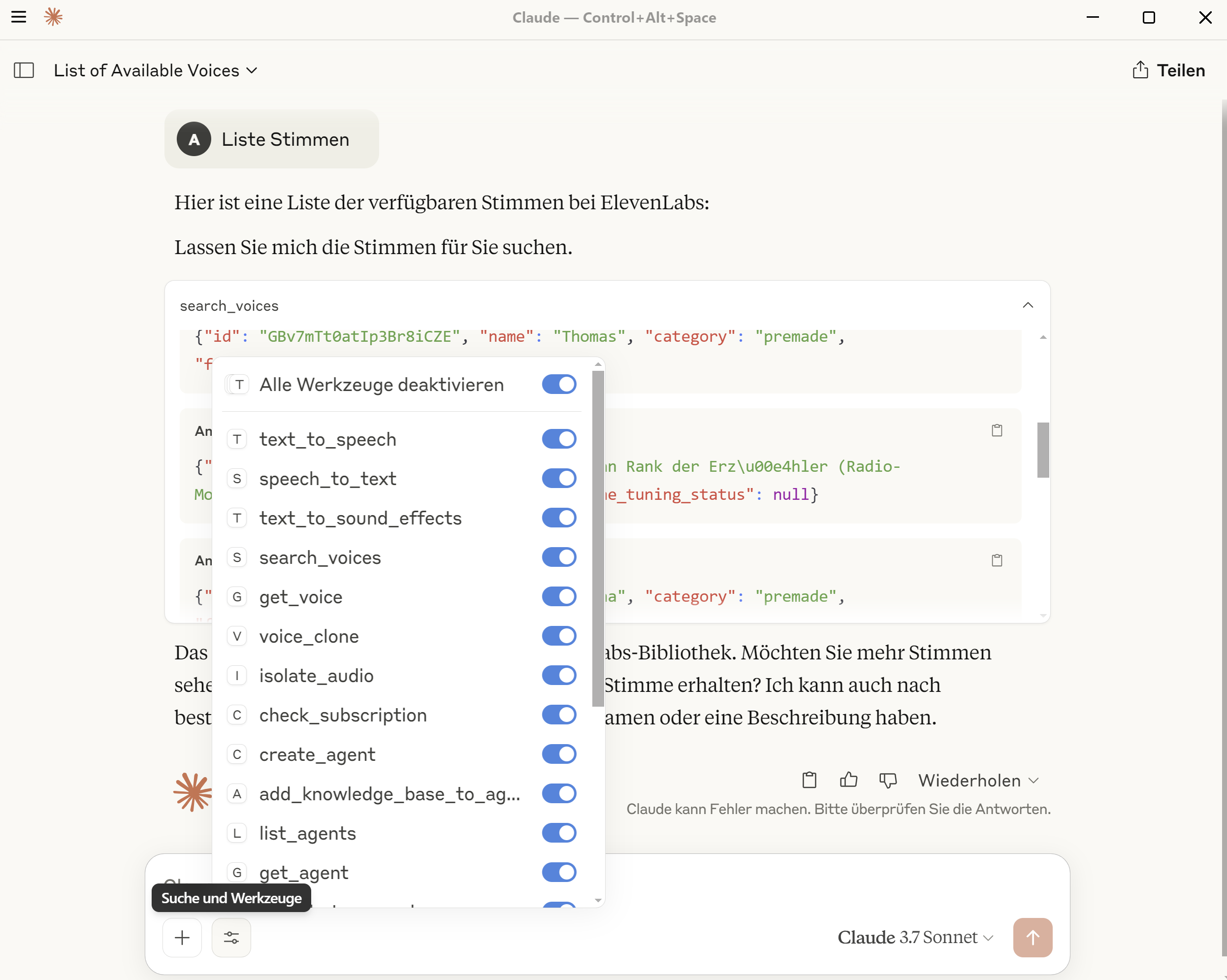

Now it’s time to try MCP for ourselves. First, we need a suitable host, e.g., Claude Desktop for Windows, which already has an MCP client integrated. Then we need an MCP server for a service that we want to make available in Claude Desktop. In our case, we chose the official Elevenlabs MCP server written in Python, which we want to run under Windows in the Windows Subsystem for Linux (WSL). As our specific environment, we use WSL2 with Ubuntu 22.04 as the distribution.

Elevenlabs API Key

We need an Elevenlabs API key, which can be easily generated.

Installing the MCP Server (WSL)

If the Python package manager uv is not yet available,

it must be installed.

We clone the Elevenlabs MCP server under WSL into a directory:

# in our case in $HOME/projects

git clone https://github.com/elevenlabs/elevenlabs-mcp.git

# change to the directory

cd elevenlabs-mcp

# test start of the MCP server (without ELEVENLABS_API_KEY)

uv run elevenlabs_mcp

# ... fails as expected with 'Error: ElevenLabs API key is required.'OK, the WSL side is now prepared.

Claude Desktop (Windows)

In Claude Desktop, we need to add our server to the configuration file.

Under File/Settings/Developer/Edit Configuration, we can navigate to the directory

of the claude_desktop_config.json and open the file in an editor.

Here, the user alex with the home directory /home/alex is used in the WSL distribution.

- we start WSL (default distribution) for the user

alexwithwsl.exe --user alex - via

bash --loginwe load the user’s environment variables - nevertheless, we first need to

cdinto the MCP server directory - then we start the server with

uv runand pass the API key “inline” via an environment variable

{

"mcpServers": {

"ElevenLabs": {

"command": "wsl.exe",

"args": ["--user", "alex", "bash", "--login" ,"-c", "cd /home/alex/projects/elevenlabs-mcp && ELEVENLABS_API_KEY=sk_... uv run elevenlabs_mcp"]

}

}

}Now we can restart Claude Desktop (first actually quit it by right-clicking the Claude icon in the tray and clicking “Quit”!). If everything worked,

a corresponding message should appear in the chat window indicating that the MCP servers have been loaded,

and the MCP server should appear under Search and Tools. Here you can select which of the

available tools should be offered to the language model.

The Elevenlabs functions are now available in the chat. Have fun experimenting!

Note: when, for example, a voice file has been generated and it says it was saved to “Desktop,” this refers in our case to the directory

/home/alex/Desktopunder WSL.

Conclusion and Outlook

The Model Context Protocol represents a significant advancement in the standardization of AI integrations. It offers enormous potential for chatbots and agentic systems by simplifying and standardizing the connection to external data sources and tools. Although it is not yet an officially ratified standard, it is already widely used and the selection of MCP servers in the modelcontextprotocol repository, mcpservers.org, or the collection Awesome MCP Servers is nearly overwhelming.

The current security challenges, however, are significant and require careful attention. For successful deployment in enterprise environments, robust security measures must be implemented to mitigate risks such as Tool Poisoning, Rug Pull attacks, and other vulnerabilities.

The future of MCP depends, among other things, on how these security challenges can be resolved. With the emergence of technologies like TEEs and improved protocol specifications, MCP could become a secure and indispensable building block in the AI landscape. Companies and developers should closely follow the protocol’s development and integrate proven security practices into their MCP implementations early on.

We are closely following the developments around MCP in our Lab, continuously testing new, innovative use cases with MCP, and are already deploying the protocol with due diligence in some of our solutions.

Authors

Related Posts

Agent Smith - Reloaded

AI agents promise to develop software on their own and solve complex tasks, but what really lies behind the hype? We dive deep into the technology, build a real workflow step by step, and uncover the unvarnished challenges that lurk on the road to production.

Agent Smith - will you take over?

What impact will AI have on the work of agile teams in software development? Will we be supplemented by tools or will Agent Smith take over? In the following article, we strive to get an overview of current developments and trends in AI and how agent-based workflows could change the way we work.

Hexagonal Architecture in Monoliths? Why Not!

Hexagonal architecture in a monolith? Sounds unusual - but it turned out to be a great success for our archiving tool. A field report on challenges, learnings, and the tangible benefits for developers, users, and long-term maintainability.