LangChain: Chat with your Documents!

In a recent article we introduced LangChain, a powerful open-source framework for developing applications powered by large language models (LLMs). In this article we tackle a more complex example and show you how to build a chatbot that understands your documents.

Problem statement

The main problem we need to solve is that the language model is not aware of the content of our documents, so if we ask a question about our documents, the language model will not be able to give us a meaningful answer.

Addressing this problem entails developing a mechanism to integrate the content of our documents into the language model’s knowledge and reasoning processes. This integration should enable the model to effectively comprehend and utilize the document content, thereby empowering it to deliver pertinent, insightful, and contextually appropriate responses to queries associated with these documents.

Let’s look at some possible solutions.

The simplest solution: provide the document as context along with the prompt

We can simply pass the text of our document as context along with the prompt to the language model. This is of course the simplest solution, but it has some drawbacks:

- each LLM has a token limit, i.e. the maximum number of tokens it can process at once (about 4k for GPT-3.5 at the time of writing), so we are very limited in how much context we can provide

- even if we circumvent this limit by choosing a model with a 16k token limit, the (large) context is sent with every request, and you are charged per token

If you consider these drawbacks acceptable, you could give this approach a try.
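To get a feeling for these two drawbacks before trying the approach, a back-of-the-envelope calculation helps. The sketch below is not part of the original implementation: it assumes the common rule of thumb of roughly 4 characters per token for English text (a real tokenizer such as tiktoken gives exact counts), and the price per 1k tokens is a hypothetical placeholder, so check your provider's current pricing.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate (assumption: about 4 characters per token)."""
    return max(1, round(len(text) / chars_per_token))


def fits_context(document: str, question: str, token_limit: int,
                 reserved_for_answer: int = 500) -> bool:
    """Check whether document + question still leave room for the answer."""
    used = estimate_tokens(document) + estimate_tokens(question)
    return used + reserved_for_answer <= token_limit


def cost_per_request(document: str, question: str, price_per_1k_tokens: float) -> float:
    """Input cost of a single request when the whole document is sent along."""
    tokens = estimate_tokens(document) + estimate_tokens(question)
    return tokens / 1000 * price_per_1k_tokens


document = "word " * 6000  # stand-in for a document of 30,000 characters
question = "What is this document about?"

fits_4k = fits_context(document, question, token_limit=4096)
fits_16k = fits_context(document, question, token_limit=16384)
# 0.001 is a hypothetical price per 1k input tokens, used for illustration only
cost = cost_per_request(document, question, price_per_1k_tokens=0.001)

print(f"fits 4k: {fits_4k}, fits 16k: {fits_16k}, cost per request: {cost:.6f}")
```

Keep in mind that this cost is incurred for every single question, because the full document travels with each request.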

The costly solution: fine-tune the language model on your documents

Fine-tuning the language model with a carefully curated dataset that includes your documents can yield remarkable performance improvements. This process tailors the model by creating a new version based on an existing one, enabling it to comprehend the specific domain and language utilized in your documents, resulting in more relevant and contextually appropriate responses. To equip a large language model for insightful and coherent conversations about your documents, it is imperative to address the challenges of fine-tuning, deploying, and operating the model effectively and efficiently.

If you have a large dataset of documents, this approach can be very effective. However, it is important to note that this technique is very costly, as fine-tuning a language model requires a lot of compute power and time.

OpenAI also offers fine-tuning for its LLMs, which is an interesting option if your documents are not sensitive and you can share them with OpenAI.

We will not go into detail here, but keep this in our backlog for a future article.

The smart solution: Retrieval-Augmented Generation (RAG)

Let’s first look at the definition of Retrieval-Augmented Generation:

Retrieval-Augmented Generation is a sophisticated technique in natural language processing (NLP) that combines information retrieval and generative language models. It leverages a pre-existing dataset or knowledge base to retrieve relevant information, which is then integrated into the generation process. This approach enhances the quality and relevance of the generated text, enabling the model to produce more contextually accurate and informative responses. By seamlessly fusing retrieval and generation, it empowers NLP systems to excel in tasks such as question answering, content creation, and chatbots, where contextual understanding and factual accuracy are paramount.

In simple words: Retrieval-Augmented Generation is a method to generate better and more accurate answers by using a retrieval system that fetches relevant information (“semantic search”) and passes it to the language model, which then generates the final answer based on the prompt and the retrieved information. It’s an elegant way to make generated text smarter and more useful without having to pass full documents to the language model.

With a framework like LangChain at your disposal, building a chatbot on top of an existing LLM becomes much easier. Using the provided abstractions for embeddings, for storage and retrieval with vector databases, and for conversational chains, you can build a chatbot that understands your documents. The LangChain implementations ensure that only pertinent context is provided to the LLM, which addresses our challenge of having meaningful conversations about our documents.

Let’s see how this works in detail.

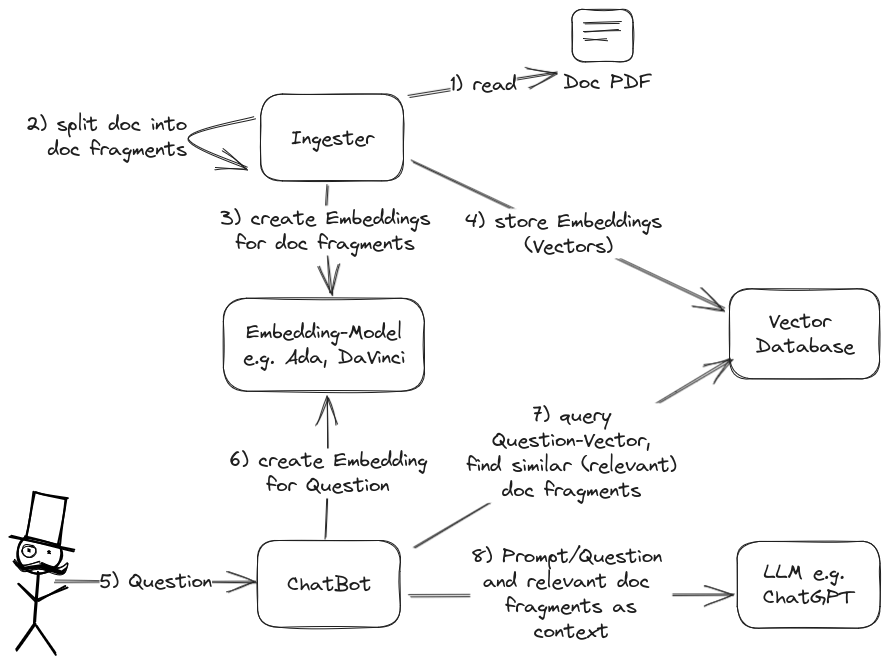

Retrieval-Augmented Generation (RAG) Overview

To be able to chat with our documents we need some preparation. We create an Ingester that reads our documents, splits them into reasonable chunks/fragments and creates embeddings for them. These embeddings are stored in a vector database. Later, when we chat about our documents and ask a question, we create an embedding for our question and query the vector database for the most similar (= relevant) chunks of text from our documents. These chunks are then passed to the LLM as context along with the prompt. The LLM then has the question and (hopefully) enough relevant context to generate a meaningful response, which is then passed back to the delighted user.

Creating embeddings for chunks of text from our documents and storing them in a vector database makes it fast and efficient to look up the relevant fragments during a conversation. We then pass these fragments to the LLM, which results in more contextually appropriate responses.
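The flow described above (ingest, embed, store, query, build the prompt) can be sketched in a few lines of plain Python. This is a toy illustration under loud assumptions: the "embedding" is just a bag-of-words count vector instead of a neural embedding model, and ToyVectorStore and build_prompt are hypothetical helpers, not LangChain or Chroma classes.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (a real system calls an embedding model here)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ToyVectorStore:
    """Hypothetical in-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, chunk: str) -> None:
        # Ingest step: store the chunk together with its embedding
        self._items.append((chunk, embed(chunk)))

    def search(self, question: str, k: int = 2) -> list[str]:
        # Query step: embed the question and return the k most similar chunks
        q = embed(question)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, context_chunks: list[str]) -> str:
    """Combine the retrieved chunks and the question into one prompt for the LLM."""
    context = "\n---\n".join(context_chunks)
    return f"Answer the question using only this context:\n{context}\n\nQuestion: {question}"


store = ToyVectorStore()
for chunk in [
    "The course covers supervised learning and linear regression.",
    "Homework assignments are posted online every week.",
    "The cafeteria serves lunch between noon and two.",
]:
    store.add(chunk)

question = "Where are the homework assignments posted?"
top_chunks = store.search(question, k=1)
prompt = build_prompt(question, top_chunks)
print(prompt)
```

Only the best-matching chunk ends up in the prompt, which is the whole point: the LLM sees a small, relevant excerpt instead of the full document.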

Embeddings

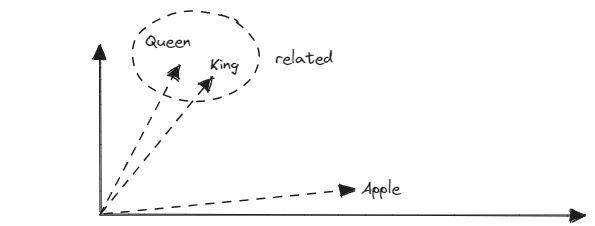

But hold on, what are embeddings? Embeddings are a way to represent words, phrases, or even entire documents as numerical vectors in a multi-dimensional space. The OpenAI Embedding model “text-embedding-ada-002” for example creates vectors with 1536 dimensions. These vectors are designed in such a way that they capture meaningful relationships and similarities between the chunks of text they represent.

Imagine you have a dictionary, and you want to represent each word in that dictionary as a unique set of numbers. With good embeddings, words that are similar in meaning or context would have similar numerical representations, and words that are dissimilar would have dissimilar representations.

For example, in a well-designed word embedding space, the vectors for “king” and “queen” might be very close to each other because they are related in meaning and context, whereas the vector for “king” would be far from the vector for “apple” because they are unrelated.
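"Close" and "far" are usually measured with cosine similarity. The following sketch uses made-up three-dimensional vectors (real embeddings have hundreds or thousands of dimensions, and the numbers below are invented purely for illustration) to show how the king/queen/apple example plays out numerically.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: near 1.0 = similar, near 0.0 = unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Invented toy vectors; the dimensions loosely mean [royalty, person, food]
vectors = {
    "king": [0.9, 0.8, 0.0],
    "queen": [0.9, 0.7, 0.1],
    "apple": [0.0, 0.1, 0.9],
}

king_queen = cosine_similarity(vectors["king"], vectors["queen"])
king_apple = cosine_similarity(vectors["king"], vectors["apple"])

print(f"king vs. queen: {king_queen:.3f}")
print(f"king vs. apple: {king_apple:.3f}")
```

With these toy values, "king" and "queen" score close to 1, while "king" and "apple" score close to 0.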

These numerical representations are extremely useful in various natural language processing tasks like machine translation, sentiment analysis, and document similarity, as they allow computers to understand and work with textual data in a more structured and meaningful way.

We utilize embeddings and the similarity-retrieval mechanisms of vector databases for our chatbot to perform semantic search. This allows us to identify relevant pieces of information pertaining to the current question more effectively.

Challenge: Token Limit

This approach is no silver bullet and there are some challenges we need to address: the token limit is still a thing, even if we pass smaller chunks of text (e.g. 1000 characters) to the LLM. We have to plan the size and the number of the fragments so that everything fits into the token limit, which also comprises the chat history and the question itself.
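Planning the number of fragments is simple arithmetic. The sketch below is an illustration, not part of the implementation that follows: it assumes roughly 4 characters per token, and the overhead and answer reservations are hypothetical values you would tune for your own prompts.

```python
import math


def max_chunks_that_fit(token_limit: int, chunk_chars: int, history_tokens: int,
                        question_tokens: int, answer_tokens: int,
                        prompt_overhead_tokens: int = 100,
                        chars_per_token: float = 4.0) -> int:
    """How many chunks fit next to history, question, prompt template and answer?"""
    chunk_tokens = math.ceil(chunk_chars / chars_per_token)
    remaining = (token_limit - history_tokens - question_tokens
                 - answer_tokens - prompt_overhead_tokens)
    return max(0, remaining // chunk_tokens)


# Short conversation: plenty of room for context
k_short = max_chunks_that_fit(token_limit=4096, chunk_chars=1000,
                              history_tokens=800, question_tokens=50, answer_tokens=500)

# Long conversation: the chat history crowds out the document context
k_long = max_chunks_that_fit(token_limit=4096, chunk_chars=1000,
                             history_tokens=3000, question_tokens=50, answer_tokens=500)

print(k_short, k_long)
```

The second call shows why a growing chat history matters: the longer the conversation, the fewer document chunks fit into the same request.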

LangChain implements several strategies to address this problem in its DocumentChains:

- “stuff”: stuff everything in “as is”

- “refine”: pass one document/fragment at a time to the LLM and refine the result with the next document/fragment

- “map reduce”: extract the essence of every single document (regarding the question), combine the results and pass the combined result as context along with the question to the LLM for the final answer

- “map rerank”: try to answer the question with every document/fragment individually and rank the answers, select the highest rated answer and pass it back to the user as final answer
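To make the difference between these strategies more tangible, here is a simplified sketch of three of them ("map rerank" is left out for brevity). It is not LangChain's implementation: the prompts are invented for illustration, and FakeLLM is a hypothetical stand-in that only records the prompts it receives so that we can count the LLM calls.

```python
from typing import Callable, List

LLM = Callable[[str], str]


def stuff(llm: LLM, question: str, chunks: List[str]) -> str:
    """One call: put all chunks into a single prompt."""
    context = "\n\n".join(chunks)
    return llm(f"Context:\n{context}\n\nQuestion: {question}")


def refine(llm: LLM, question: str, chunks: List[str]) -> str:
    """One call per chunk: improve the running answer with each new chunk."""
    answer = ""
    for chunk in chunks:
        answer = llm(
            f"Question: {question}\nExisting answer: {answer}\n"
            f"New context:\n{chunk}\nRefine the answer."
        )
    return answer


def map_reduce(llm: LLM, question: str, chunks: List[str]) -> str:
    """One call per chunk to extract the essence, plus one final combining call."""
    partials = [llm(f"Extract what is relevant to '{question}' from:\n{c}") for c in chunks]
    return stuff(llm, question, partials)


class FakeLLM:
    """Hypothetical stand-in for a real model; it just records prompts."""

    def __init__(self) -> None:
        self.prompts: List[str] = []

    def __call__(self, prompt: str) -> str:
        self.prompts.append(prompt)
        return f"reply-{len(self.prompts)}"


chunks = ["chunk one", "chunk two", "chunk three"]
calls = {}
for strategy in (stuff, refine, map_reduce):
    llm = FakeLLM()
    strategy(llm, "What is this about?", chunks)
    calls[strategy.__name__] = len(llm.prompts)

print(calls)
```

The call counts show the trade-off: "stuff" is the cheapest but needs everything to fit into one prompt, while the other strategies keep each prompt small at the price of more LLM calls.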

Using this solution is a smart way to be able to “chat with your docs” and address the token limit and the cost problem. With the help of LangChain it is also rather easy to implement.

Let’s write some code.

Implementation

To implement chatting with our documents using RAG, we need a handful of components:

- a PDF parser for the documents to extract the text (image-based PDFs will not work)

- a component to split the documents into fragments or chunks

- an embeddings provider to create embeddings for the fragments and the questions

- a vector database to store and retrieve the embeddings and document-chunks

- an LLM to generate the answers to the questions and the given document-chunks
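Of these components, the splitter is the easiest one to picture in code. The sketch below is a deliberately naive fixed-size splitter with overlap. It is a simplification for illustration only: the RecursiveCharacterTextSplitter we use later additionally tries to break at natural boundaries such as paragraphs and sentences.

```python
from typing import List


def split_with_overlap(text: str, chunk_size: int = 1000, chunk_overlap: int = 150) -> List[str]:
    """Cut text into chunks of chunk_size characters; neighbours share chunk_overlap characters."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last chunk already reaches the end of the text
    return chunks


text = "".join(str(i % 10) for i in range(2000))  # 2000 characters of dummy text
chunks = split_with_overlap(text)

print([len(c) for c in chunks])
```

The overlap makes sure that a sentence cut at a chunk boundary still appears in full in at least one of the two neighbouring chunks.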

We use the powerful LangChain abstractions, which already provide us with access to the required components and make our implementation much easier and more concise. To facilitate the implementation of a UI in the next blog article, we wrap the functionality into a class that provides appropriate methods for the chat.

We decided to use OpenAI for Embeddings and Chat and Chroma for the vector database. LangChain contains implementations for a variety of LLMs and vector databases, so you can choose the one that fits your needs best.

The ConversationalRetrievalChain does the main work for us: it is based on the RetrievalQAChain, which takes care of the retrieval and the generation of the answer, and adds the possibility to provide the chat history of the conversation. We just need to provide the LLM, the embedding-provider and the vector database.
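Why does the chat history matter for retrieval? A follow-up such as "Who teaches it?" is useless as a search query on its own. As far as we understand the chain, it therefore first asks the LLM to rewrite the follow-up into a standalone question and uses that for the similarity search (this is the "generated question" our class returns later). The sketch below illustrates the idea only: the prompt wording is invented, and standalone_question and fake_llm are hypothetical helpers, not LangChain functions.

```python
from typing import Callable, List, Tuple

ChatHistory = List[Tuple[str, str]]  # (question, answer) pairs


def format_history(chat_history: ChatHistory) -> str:
    """Render the (question, answer) pairs as a plain-text transcript."""
    return "\n".join(f"Human: {q}\nAssistant: {a}" for q, a in chat_history)


def standalone_question(llm: Callable[[str], str], chat_history: ChatHistory,
                        follow_up: str) -> str:
    """Rewrite a follow-up so that it can be searched for without the history."""
    if not chat_history:
        return follow_up  # first turn: nothing to condense
    prompt = (
        "Rephrase the follow-up question as a standalone question.\n\n"
        f"Chat history:\n{format_history(chat_history)}\n\n"
        f"Follow-up question: {follow_up}\nStandalone question:"
    )
    return llm(prompt).strip()


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call; returns a fixed rewrite for this demo
    return " Who teaches CS229? "


history = [("What is CS229 all about?", "CS229 is a machine learning class.")]
first_turn = standalone_question(fake_llm, [], "What is CS229 all about?")
second_turn = standalone_question(fake_llm, history, "Who teaches it?")

print(first_turn)
print(second_turn)
```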

We implement the class in a file called docchat.py:

import os
import sys

import openai
from langchain.text_splitter import RecursiveCharacterTextSplitter
from langchain.chains import ConversationalRetrievalChain
from langchain.document_loaders import PyPDFLoader

# LLM and embeddings
from langchain.chat_models import ChatOpenAI
from langchain.embeddings.openai import OpenAIEmbeddings

# Alternative LLM and embeddings: GPT4All
# from langchain.llms import GPT4All
# from langchain.embeddings import GPT4AllEmbeddings

# VectorDatabase - store & retrieve embeddings
from langchain.vectorstores import Chroma

# Alternative if you only have small docs: DocArrayInMemorySearch instead of Chroma, see DocChat._load_and_process_document
# from langchain.vectorstores import DocArrayInMemorySearch

openai.api_key = os.environ['OPENAI_API_KEY']

llm_name = "gpt-3.5-turbo"

# Use OpenAI for chat completion
llm = ChatOpenAI(model_name=llm_name, temperature=0)

# Use OpenAI to create embeddings for chunks and questions
embeddings = OpenAIEmbeddings()

# Alternative GPT4All, model must point to the model-file you want to use
# llm = GPT4All(model="/home/alex/.local/share/nomic.ai/GPT4All/wizardlm-13b-v1.1-superhot-8k.ggmlv3.q4_0.bin", n_threads=8)
# embeddings = GPT4AllEmbeddings()

# create Vector Database to insert/query Embeddings - we currently do not persist the embeddings on disk
vectordb = Chroma(embedding_function=embeddings)

class Document:
    """
    Document class to store document name and filepath
    """

    def __init__(self, name: str, filepath: str):
        self.name = name
        self.filepath = filepath

class ChatResponse:
    """
    ChatResponse class to store the response from the chatbot.
    """
    answer: str = None
    db_question: str = None
    db_source_chunks: list = None

    def __init__(self, answer: str, db_question: str = None, db_source_chunks: list = None):
        self.answer = answer
        self.db_question = db_question
        self.db_source_chunks = db_source_chunks

    def __str__(self) -> str:
        return f"{self.db_question}: {self.answer} with {self.db_source_chunks}"

class DocChat:
    """
    DocChat class to store the info for the current document and provide methods for chat.
    """
    _qa: ConversationalRetrievalChain = None

    # chat history as a list of (question, answer) tuples
    _chat_history: list[tuple[str, str]] = []

    # Holds the current document we can chat about
    doc: Document = None

    def process_doc(self, doc: Document) -> int:
        """
        Process the document (split, create & store embeddings) and create the chatbot qa-chain.

        :param doc: the document to process
        :return: number of embeddings created
        """
        self.doc = doc
        (self._qa, embeddings_count) = self._load_and_process_document(doc.filepath, chain_type="stuff", k=3)
        # Clear chat-history, so prompts and responses for previous documents are not considered as context
        self.clear_history()
        return embeddings_count

    @staticmethod
    def _load_and_process_document(file: str, chain_type: str, k: int) -> tuple[ConversationalRetrievalChain, int]:
        loader = PyPDFLoader(file)
        documents = loader.load()

        # Split document in chunks of 1000 chars with 150 chars overlap - adjust to your needs
        text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=150)
        docs = text_splitter.split_documents(documents)

        # Make sure that we forget the embeddings of previous documents
        db = Chroma()
        db.delete_collection()

        # Fill Vector Database with embeddings for current document
        db = Chroma.from_documents(docs, embeddings)
        # Alternative: see https://python.langchain.com/docs/integrations/vectorstores/docarray_in_memory
        # db = DocArrayInMemorySearch.from_documents(docs, embeddings)

        # Create Retriever, return `k` most similar chunks for each question
        retriever = db.as_retriever(search_type="similarity", search_kwargs={"k": k})

        # create a chatbot chain, conversation-memory is managed externally
        qa = ConversationalRetrievalChain.from_llm(
            llm=llm,
            chain_type=chain_type,
            retriever=retriever,
            return_source_documents=True,
            return_generated_question=True,
            verbose=True,
        )
        return qa, len(docs)

    def get_response(self, query) -> ChatResponse:
        if not query:
            return ChatResponse(answer="Please enter a question")
        if not self._qa:
            return ChatResponse(answer="Please upload a document first")

        # Let LLM generate the response and store it in the chat-history
        result = self._qa({"question": query, "chat_history": self._chat_history})
        answer = result["answer"]
        self._chat_history.extend([(query, answer)])
        return ChatResponse(answer, result["generated_question"], result["source_documents"])

    def clear_history(self):
        self._chat_history = []

# main method for testing only - please note: embeddings are not persisted
if __name__ == "__main__":
    if len(sys.argv) != 3:
        print("Usage: python docchat.py <file_path> <question>")
        sys.exit(1)

    file_path = sys.argv[1]
    question = sys.argv[2]

    doc_chat = DocChat()
    file_name = os.path.basename(file_path)
    embeddings_count = doc_chat.process_doc(Document(name=file_name, filepath=file_path))
    print(f"Created {embeddings_count} embeddings")

    response = doc_chat.get_response(question)
    print(response.answer)
    sources = [x.metadata for x in response.db_source_chunks]
    print(sorted(sources, key=lambda s: s['page']))

In the last few lines, we implemented a main method to test our DocChat. We create a DocChat instance and process the document we want to chat about. Then we ask the question passed on the command line and print the answer together with the metadata of the source chunks, sorted by page.

Depending on the PDF you choose, you will get different answers, but the answers should be meaningful and contextually appropriate.

We use pipenv to manage the virtual environment and the dependencies; you can easily install it with pip install pipenv. We specify the requirements in a Pipfile and use pipenv install to create a virtual environment and install the dependencies in one step.

This would be our Pipfile with the dependencies:

[[source]]
url = "https://pypi.org/simple"
verify_ssl = true
name = "pypi"

[packages]
langchain = {version = "==v0.0.308", extras = ["llms"]}
chromadb = "~=0.4.13"
pypdf = "~=3.16.2"
tiktoken = "~=0.5.1"
unstructured = "~=0.10.19"
# If you want to try GPT4All, see below
# gpt4all = "==1.0.12"

[requires]
python_version = "3.10"

To run our class, we need to install the requirements and set the OpenAI API key. Then we are ready to run our code.

As an example, I’ve obtained the transcript of Andrew Ng’s CS229 Machine Learning Course in PDF format and saved it in a “docs” folder in the current directory. However, feel free to use any PDF of your choice, provided that it contains text and does not consist of images only.

# Install Dependencies
pipenv install

You can launch a subshell with the virtual environment with pipenv shell and run your python interpreter in this shell:

export OPENAI_API_KEY=<YOUR_API_KEY>
# start subshell in venv
pipenv shell
# |-- place path to your doc here --| |-- your question --|
python docchat.py "docs/MachineLearning-Lecture01.pdf" "What is CS229 all about?"

Alternatively you can use pipenv run to execute your python interpreter in the virtual environment:

export OPENAI_API_KEY=<YOUR_API_KEY>
# |-- place path to your doc here --| |-- your question --|
pipenv run python docchat.py "docs/MachineLearning-Lecture01.pdf" "What is CS229 all about?"

Either way, the output should read like this:

CS229 is a machine learning class taught by Andrew Ng. It covers the technical content of machine learning,
including math and equations. The class assumes that students have a basic understanding of linear algebra
and matrix operations. Homework assignments and solutions are posted online, and there is a newsgroup for
class discussions. The class is televised and can be watched at home.

Please be aware that the embeddings for your document are not persisted to disk. Therefore, each time you restart the application, you’ll need to recreate them, which may incur costs based on the document’s size and the number of segments it’s split into. The possibility to use our class via the command line is solely for a quick test of the base class for our chatbot - we will build a web UI using this class in our next article.

Depending on your use case, it’s advisable to save and reuse the embeddings for efficiency and cost optimization. You can implement this according to your needs: you can store all documents in one single vector database and build a chatbot that can chat about all of them. Or you can store the embeddings for each document in a separate collection within one vector database, or even in a separate vector database, so that you can control the scope of your chat.
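One way to avoid paying for the same embeddings twice is to cache them on disk, keyed by a hash of the document content. The sketch below shows the idea with plain JSON files. It is an illustration under assumptions, not the approach used in docchat.py: load_or_create_embeddings and fake_embed are hypothetical helpers, and in a real setup you would more likely rely on the persistence features of your vector database (Chroma, for example, can store a collection in a directory on disk, if your version supports it).

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Callable, List

Vectors = List[List[float]]


def document_key(content: bytes) -> str:
    """Identify a document by the SHA-256 hash of its content."""
    return hashlib.sha256(content).hexdigest()


def load_or_create_embeddings(content: bytes, cache_dir: Path,
                              embed_chunks: Callable[[bytes], Vectors]) -> Vectors:
    """Return cached vectors if this exact document was embedded before, else create and store them."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    cache_file = cache_dir / f"{document_key(content)}.json"
    if cache_file.exists():
        return json.loads(cache_file.read_text(encoding="utf-8"))
    vectors = embed_chunks(content)  # the expensive (and possibly billed) step
    cache_file.write_text(json.dumps(vectors), encoding="utf-8")
    return vectors


embed_calls = []


def fake_embed(content: bytes) -> Vectors:
    # Stand-in for a real embeddings provider; counts how often it is called
    embed_calls.append(1)
    return [[float(len(content)), 0.0]]


with tempfile.TemporaryDirectory() as tmp:
    first = load_or_create_embeddings(b"hello", Path(tmp), fake_embed)
    second = load_or_create_embeddings(b"hello", Path(tmp), fake_embed)

print(f"embedding calls: {len(embed_calls)}")
```

The second lookup is served from the cache file, so the embeddings provider is only called once per distinct document.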

Optional: GPT4All for Embeddings and Chat

As we have mentioned in our previous post about GPT4All, we can use GPT4All models locally to create embeddings and make use of powerful LLMs. We can use the same code as above, by just installing the gpt4all package and altering a few lines (thanks to the LangChain abstractions)!

# -- comment lines using OpenAI
# llm = ChatOpenAI(model_name=llm_name, temperature=0)
# embeddings = OpenAIEmbeddings()

# ++ uncomment lines using GPT4All
from langchain.llms import GPT4All
from langchain.embeddings import GPT4AllEmbeddings

# you have to download a model and provide the path (here: Ubuntu, model downloaded with GPT4All Chat App - instead
# you can manually download Models at https://gpt4all.io/, scroll down to "Model Explorer")
llm = GPT4All(model="/home/alex/.local/share/nomic.ai/GPT4All/wizardlm-13b-v1.1-superhot-8k.ggmlv3.q4_0.bin", n_threads=8)
# the embedding model will be downloaded automatically
embeddings = GPT4AllEmbeddings()

Please note that GPT4All 1.0.9 seems to have a bug, so you either have to stick to gpt4all = "==1.0.8" or update to gpt4all = "==1.0.12" in your Pipfile.

If the performance of the GPT4All completion models does not suffice for your use case, you can mix and match and use OpenAI’s completion model and GPT4All for the Embeddings, effectively combining the strengths of both approaches.

Conclusion

In this article we built the foundation for a chatbot that understands your documents using Retrieval-Augmented Generation (RAG). We used the powerful LangChain abstractions to implement the base class for the chatbot in just a few lines.

In the next article we will explain how to build a simple but functional UI for our chatbot, so stay tuned!
