This article was automatically translated from the German original using AI.

Postgres in Kubernetes - Automation with the Postgres Operator

“Persistence is hard” - as a Kubernetes administrator, you are well aware of this fact. Databases are a core component of many applications and must be operated reliably and securely. Support is always welcome, especially when you need to create and manage many instances. Automating databases in Kubernetes is a challenge that can be solved with operators. In this article, we demonstrate how this can be achieved using the “Crunchy Data Postgres Operator” as an example.

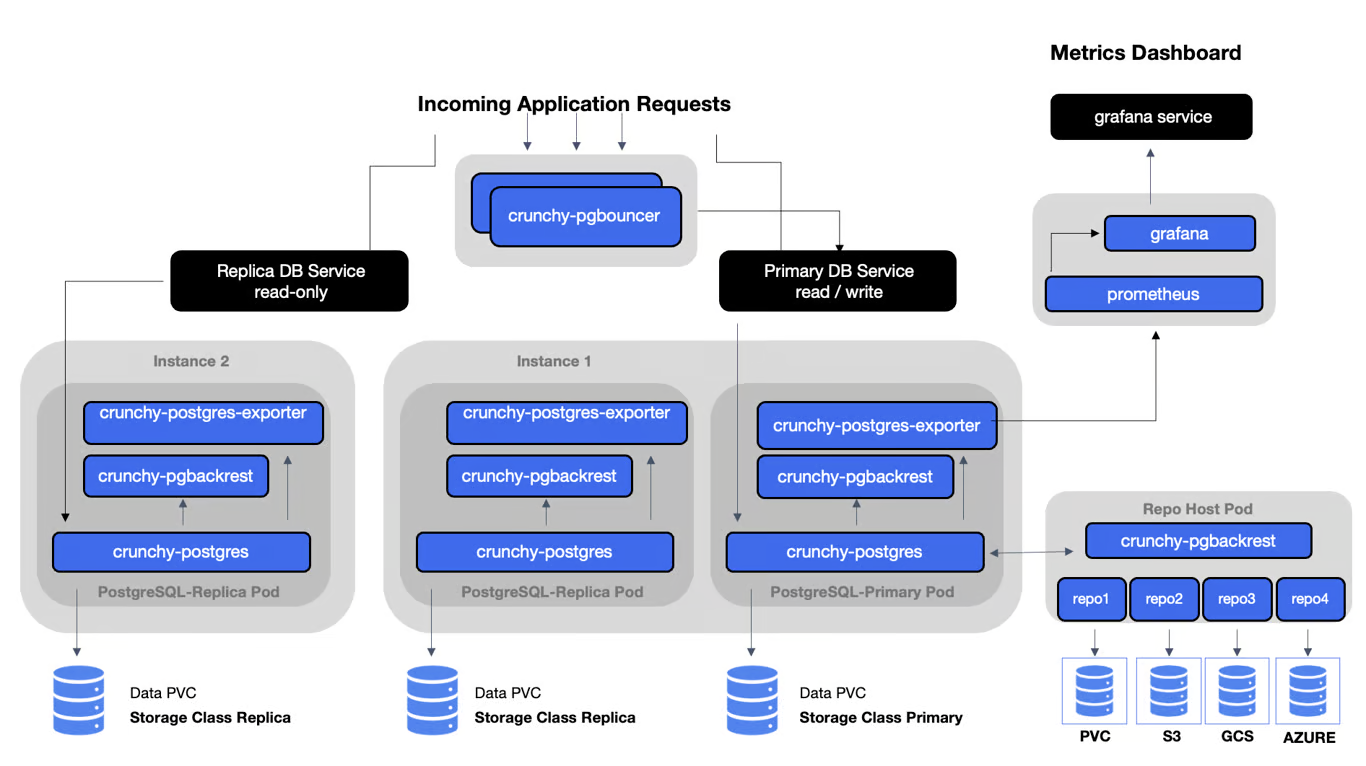

Architecture

The goal of the Crunchy Data Postgres Operator (PGO) is to provide a quick and straightforward way to run applications with PostgreSQL in both development and production environments. That sounds interesting :-)

To better understand how PGO accomplishes this, you should take a look at the operator’s architecture.

The Crunchy PostgreSQL Operator extends Kubernetes with a higher level of abstraction for easier creation and management of PostgreSQL clusters. It uses “Custom Resources” and defines special Custom Resource Definitions (CRDs) to control itself and the PostgreSQL clusters it creates.

PGO itself runs as a Kubernetes Deployment with a single container, the Operator. The Operator uses Kubernetes controllers that watch events on Kubernetes resources (Jobs, Pods) as well as on the Custom Resources (PostgresCluster, PGUpgrade) and reconcile the actual state with the declared desired state.

PGO is very capable and can provision highly available clusters with multiple replicas, backup options (local & S3), as well as monitoring and connection pooling.
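As a rough sketch of how these capabilities surface in a PostgresCluster manifest, the fragment below requests two Postgres instances, a PgBouncer connection pooler, and the monitoring exporter. The field names follow the PGO v5 API as we understand it. The replica counts are illustrative assumptions, required fields such as dataVolumeClaimSpec are left out for brevity, and depending on the installation an explicit image may have to be set for the pooler and the exporter.

```yaml
# Fragment of a PostgresCluster spec, not a complete manifest.
spec:
  instances:
    - name: instance1
      replicas: 2        # assumed count: one primary plus one replica
  proxy:
    pgBouncer:
      replicas: 1        # connection pooling in front of the cluster
  monitoring:
    pgmonitor:
      exporter: {}       # metrics exporter; add an `image` field if your install requires it
```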

Installation

Getting started is easy thanks to the excellent documentation. The Quickstart Guide walks you through the installation of the Postgres Operator all the way to provisioning your first Postgres database.

The only prerequisite is a Kubernetes cluster that is reachable via kubectl.

The Operator is installed using Helm or kustomize. Only a few steps are required:

Clone the example repository with default configurations:

    git clone https://github.com/CrunchyData/postgres-operator-examples.git
    cd postgres-operator-examples

The example repo contains various sample configurations for deploying the Operator and databases via Helm or Kustomize.

Installing the Operator in the “postgres-operator” namespace via Kustomize is done with:

    kubectl apply -k kustomize/install/namespace
    kubectl apply --server-side -k kustomize/install/default

The First Postgres Database

After installation, you can create your first Postgres database. This is done using a Custom Resource (an instance of the PostgresCluster CRD) that describes the database configuration. An example for a simple database is included in the kustomize/postgres directory:

    kubectl apply -k kustomize/postgres

Essentially, only a Custom Resource is created that describes the database. The Postgres Operator ensures that the database instance is created and all necessary additional resources (backup pod, ConfigMaps, Secrets, Services, PVCs, …) are provisioned.

    apiVersion: postgres-operator.crunchydata.com/v1beta1
    kind: PostgresCluster
    metadata:
      name: hippo
      namespace: mynamespace
    spec:
      postgresVersion: 16
      users:
        - name: rhino
          databases:
            - zoo
      instances:
        - name: instance1
          dataVolumeClaimSpec:
            accessModes:
              - "ReadWriteOnce"
            resources:
              requests:
                storage: 1Gi
      backups:
        pgbackrest:
          repos:
            - name: repo1
              volume:
                volumeClaimSpec:
                  accessModes:
                    - "ReadWriteOnce"
                  resources:
                    requests:
                      storage: 1Gi

Tip

If you want to run the Operator with a backup repo, you need at least one additional node, since the repo container must by default run on a different node than the database instance. Alternatively, you can adjust the topology-spread-constraints for the backup repos accordingly.

Shortly after, we can already see the containers and Secrets in our namespace “mynamespace” and can start using our database.

Access!

To access the database, we start a container from which we can connect to the database using psql. We reference the Secret hippo-pguser-rhino, which the Operator created for our database user, and map all of its keys to environment variables that we can then use for the psql command.

    kubectl apply -f - <<EOF
    apiVersion: v1
    kind: Pod
    metadata:
      name: pgclient
      namespace: mynamespace
    spec:
      containers:
        - name: pgclient
          image: postgres:latest
          command: ["sleep", "infinity"]
          envFrom:
            - secretRef:
                name: hippo-pguser-rhino
          stdin: true
          tty: true
    EOF

Then we connect to the container and start a shell:

    kubectl exec -n mynamespace -it pgclient -- bash

Because we mapped the Secret entries to environment variables, we can conveniently access the database:

    PGPASSWORD="$password" psql -U "$user" -d "$dbname" -h "$host"

And off we go - now we can interact with our database instance.

    -- List users
    \du+
    -- List databases
    \l

If these commands work, then an application can also access the database using the information from the Secret.
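For an application, a sketch along the following lines may be a reasonable starting point. It reads individual keys from the Secret via secretKeyRef instead of importing every key with envFrom. The Deployment name, the image, and the DB_* variable names are hypothetical. The keys host, dbname, user and password are the ones used in the psql command above.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: zoo-app                      # hypothetical application name
  namespace: mynamespace
spec:
  replicas: 1
  selector:
    matchLabels:
      app: zoo-app
  template:
    metadata:
      labels:
        app: zoo-app
    spec:
      containers:
        - name: app
          image: registry.example.com/zoo-app:1.0   # placeholder image
          env:
            # Each variable is filled from one key of the Operator-managed Secret.
            - name: DB_HOST
              valueFrom:
                secretKeyRef: { name: hippo-pguser-rhino, key: host }
            - name: DB_NAME
              valueFrom:
                secretKeyRef: { name: hippo-pguser-rhino, key: dbname }
            - name: DB_USER
              valueFrom:
                secretKeyRef: { name: hippo-pguser-rhino, key: user }
            - name: DB_PASSWORD
              valueFrom:
                secretKeyRef: { name: hippo-pguser-rhino, key: password }
```

Selecting keys explicitly keeps the application's environment small and makes it obvious which parts of the Secret it depends on.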

Using the Public Schema

Please note: for security reasons, Postgres has long recommended revoking the CREATE privilege on the “public” schema from ordinary users. With Postgres 15, this recommendation became the default behavior. This change improves security but causes difficulties for those who connect as a regular user and immediately try to create tables.

To set up a schema for the user, the spec.databaseInitSQL field can be used to create appropriate schemas via an init SQL script.
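A minimal sketch of this approach, assuming the init script is stored in a ConfigMap and referenced by name and key. The ConfigMap name hippo-init-sql is hypothetical. The sketch also assumes that the script is executed through psql (so that the `\c` meta-command works) and that the role rhino already exists when it runs. Both assumptions are worth verifying against the PGO documentation for your version.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: hippo-init-sql               # hypothetical name
  namespace: mynamespace
data:
  init.sql: |
    \c zoo
    CREATE SCHEMA IF NOT EXISTS rhino AUTHORIZATION rhino;
---
# Fragment of the PostgresCluster spec that points at the ConfigMap above.
spec:
  databaseInitSQL:
    name: hippo-init-sql             # ConfigMap name
    key: init.sql                    # key inside the ConfigMap
```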

Alternatively, you can use the automatic user schema creation feature, which can simply be enabled via an annotation on the PostgresCluster resource. More information can be found in the User / Database Management Tutorial.

    metadata:
      name: hippo
      annotations:
        postgres-operator.crunchydata.com/autoCreateUserSchema: "true"

This automatically creates a schema with the user’s name when the user is created, which Postgres also prioritizes in the search_path, as the following statement shows:

    SHOW search_path;
       search_path
    -----------------
     "$user", public

Configuration

Backup

Backup is enabled by default and uses pgBackRest. The configuration is described in the Backup Guide. The backup is performed with a separate container and can be configured in various ways. For example, you can define the number of backups and the retention period. Encryption of backups is also possible. Configuration is done via the backups section of the PostgresCluster custom resource.
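To illustrate retention and scheduling, the fragment below is a sketch based on the pgBackRest options that PGO passes through in the global section. The concrete values (14 days, the cron expressions) are assumptions chosen for the example, not recommendations.

```yaml
# Fragment of a PostgresCluster spec, not a complete manifest.
spec:
  backups:
    pgbackrest:
      global:
        repo1-retention-full: "14"          # keep full backups for 14 ...
        repo1-retention-full-type: time     # ... days (use "count" to keep a number of backups)
      repos:
        - name: repo1
          schedules:
            full: "0 1 * * 0"               # full backup every Sunday at 01:00
            differential: "0 1 * * 1-6"     # differential backup on the other days
          volume:
            volumeClaimSpec:
              accessModes:
                - "ReadWriteOnce"
              resources:
                requests:
                  storage: 1Gi
```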

For a long time, it was not possible to skip backups for, say, test databases.

Optional Backup

A relatively new feature in version 5.7 is the frequently requested “Optional Backup”. This allows databases to be excluded from backup. This is useful, for example, when a database only contains temporary data or should not be backed up, such as a development database. Configuration is also done via the Operator’s CRD. More information can be found in the Optional Backup Guide.

To run our already deployed example database (see above) without backup, we remove the backup section from the spec and add an annotation that allows the Operator to discard an existing backup. If a database is newly created without backup, this annotation is not needed.

    apiVersion: postgres-operator.crunchydata.com/v1beta1
    kind: PostgresCluster
    metadata:
      name: hippo
      annotations:
        postgres-operator.crunchydata.com/authorizeBackupRemoval: "true"
    spec:
      postgresVersion: 16
      users:
        - name: rhino
          databases:
            - zoo
      instances:
        - name: instance1
          dataVolumeClaimSpec:
            accessModes:
              - "ReadWriteOnce"
            resources:
              requests:
                storage: 1Gi

Day Two Operations

Of course, operating a database involves much more than just creating and deleting databases. The Operator offers many features that simplify the daily operation of databases in Kubernetes.

These include:

- Monitoring

- Cluster management

- Scaling/clustering

- Configuration changes

- Version upgrades

- Migration

- …

CrunchyData has created tutorials for Day Two Operations and documented typical questions with numerous Guides. This demonstrates the true strength and maturity of the solution.

Kubernetes - Helm Deployment

In addition to installing the Postgres Operator via kustomize, CrunchyData also offers a Helm chart. The chart is very flexible and provides many configuration options.

Installing the Operator with Helm is somewhat cumbersome, as the charts are no longer(?) available in a repository. Instead, the chart must be downloaded and included as a dependency in your own chart, for example. The default values are well suited for getting started and can be easily adjusted for specific requirements.
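One way to include the downloaded chart as a dependency is an umbrella chart that points at the local copy. The following Chart.yaml is only a sketch: the umbrella chart name, the relative path to the checkout, and the dependency name pgo are assumptions, and the version placeholder has to be replaced with the version found in the downloaded chart's own Chart.yaml.

```yaml
# Chart.yaml of a hypothetical umbrella chart
apiVersion: v2
name: my-postgres-operator           # hypothetical chart name
version: 0.1.0
dependencies:
  - name: pgo                        # assumed: must match `name` in the downloaded Chart.yaml
    version: "x.y.z"                 # placeholder: must match `version` in the downloaded Chart.yaml
    repository: "file://../postgres-operator-examples/helm/install"   # assumed local checkout path
```

After adjusting the placeholders, `helm dependency update` copies the local chart into the umbrella chart's charts/ directory.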

CrunchyData also provides a Helm chart for deploying database instances, which can be integrated into the deployments of applications that require Postgres databases.

Tip

When installing the Operator with Helm via ArgoCD, the error “Too long: must have at most 262144 bytes” may occur when synchronizing Custom Resource Definitions (CRDs). This is because Kubernetes limits the total size of an object’s annotations to 256 KiB (262144 bytes). By default, Argo CD uses Client-Side Apply, which adds the last-applied-configuration annotation, and with extensive CRDs this can cause the limit to be exceeded.

The recommended solution is to enable Server-Side Apply, which does not add this annotation. In Argo CD version 2.5 and later, this can be achieved by setting the sync option ServerSideApply=true. For earlier versions, kubectl replace can be used as an alternative, but with caution, as it can lead to unexpected results when multiple clients modify an object.

This article describes the problem and solution very well.
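As an illustration, the sync option can be set on the Argo CD Application that deploys the Operator. The Application name, repository URL, path, and target namespace below are placeholders, only the syncOptions entry is the point of the sketch.

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
metadata:
  name: postgres-operator            # hypothetical Application name
  namespace: argocd
spec:
  project: default
  source:
    repoURL: https://git.example.com/platform/deployments.git   # placeholder repository
    targetRevision: main
    path: postgres-operator          # placeholder path to the chart
  destination:
    server: https://kubernetes.default.svc
    namespace: postgres-operator
  syncPolicy:
    syncOptions:
      - ServerSideApply=true         # avoids the oversized last-applied-configuration annotation
```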

Conclusion

The Crunchy Data Postgres Operator is a mature system for running Postgres databases in Kubernetes. Installation is straightforward, and configuration is done via Custom Resource Definitions (CRDs). The Operator offers many features that simplify operating databases in Kubernetes. The documentation is excellent and contains many examples and tutorials. The Operator is a great choice for automating Postgres databases in Kubernetes.

The Operator provides support for administering Postgres databases in Kubernetes and hides much of the complexity. Nevertheless, the stable operation of one (or many!) database(s) remains a challenge and requires a certain level of experience and knowledge.

And remember: no backup, no sympathy!
