This article was automatically translated from the German original using AI.

Hexagonal Architecture in Monoliths? Why Not!

The Project

Before diving into implementations and architectures, I want to briefly outline the project: its business context, the starting situation, and the considerations that shaped it. From a business perspective, it is an archiving tool. Users needed to scan paper documents that had accumulated over the past decades, verify their validity, add metadata, and then store them in an audit-proof archive system. Because the client is a public-sector organization, stricter rules apply to the correct storage and retrieval of data, as well as to the timely deletion or anonymization of sensitive information.

One complication was the variety of archives within the organization: structures that had grown over decades and could not be unified in one big bang. We therefore had to provide a structured process that meets the legal requirements while remaining flexible enough to accommodate the specifics of each department. The history of this application goes back several years; several versions had been implemented and discarded shortly afterwards. We wanted to break this cycle with a sustainable and extensible approach.

What Is Hexagonal Architecture?

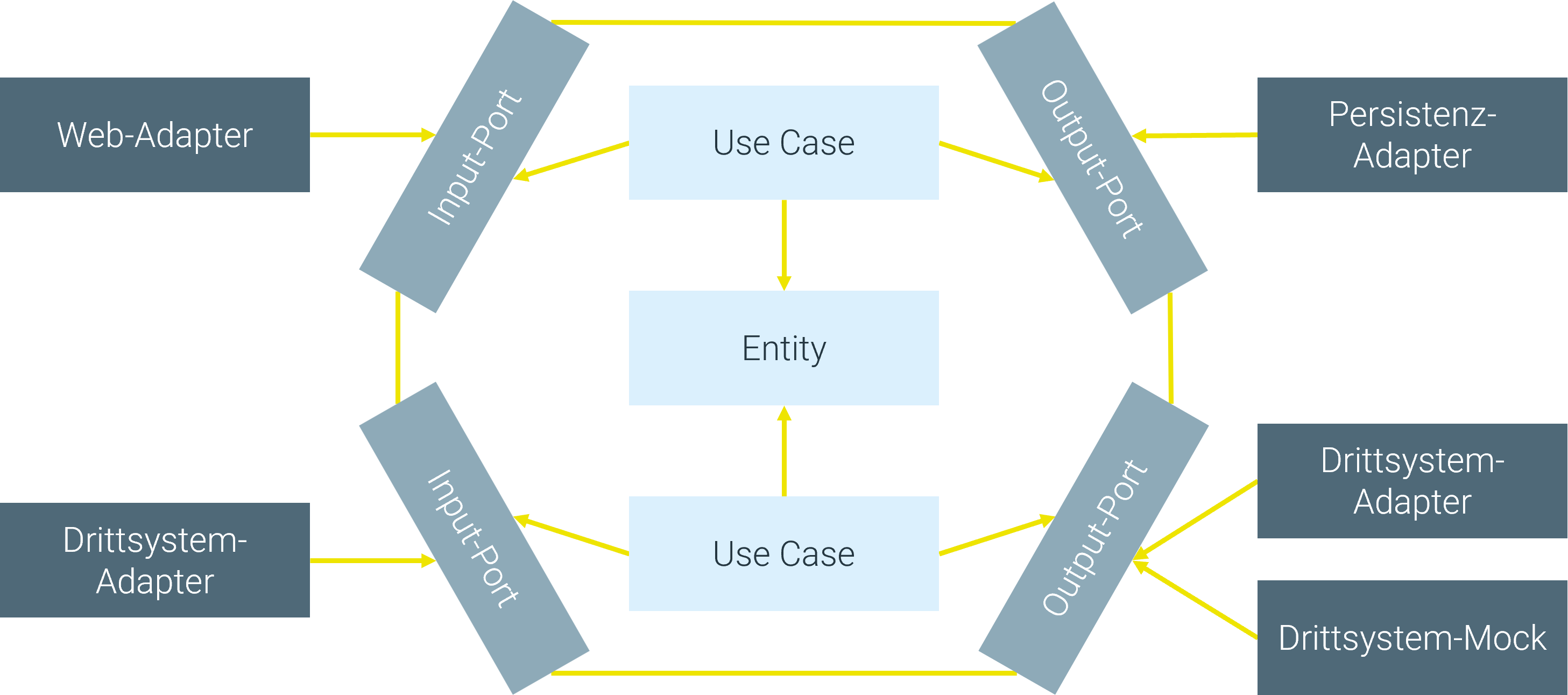

I want to give a rough overview of Hexagonal Architecture (HA) without losing focus on our experiences with it. At the coarsest level, HA consists of three layers:

- Domain with core logic in Entities, Value Objects, and Domain Services

- Application with Use Cases and Ports

- Infrastructure with Adapters

At the center of each hexagon are the entities: business objects that represent the customer’s assets. Classic examples are ATMs or cars; in our project, they are “files,” “documents,” and “attachments.” Entities are accessed through Use Cases, whose logic can modify, link, or delete entities, among other things. More complex Use Cases can be composed from several simpler ones. In such a composition, a first business process fully executes its core task, for example finalizing files after their retention period expires. It then triggers another, functionally independent Use Case, such as notifying the responsible employees, without needing to know how that Use Case is implemented.
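To make the composition concrete, here is a minimal sketch of the finalize-then-notify example. It is illustrative only and not the project’s actual code: the names `ArchiveFile`, `FinalizeExpiredFiles`, and `NotifyResponsibleEmployees` are hypothetical, and the rule that a file counts as expired once today is after its retention end date is an assumption.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.List;

// Hypothetical entity: a file whose retention period ends on a fixed date.
final class ArchiveFile {
    private final String id;
    private final LocalDate retentionEnd;
    private boolean finalized;

    ArchiveFile(String id, LocalDate retentionEnd) {
        this.id = id;
        this.retentionEnd = retentionEnd;
    }

    String id() { return id; }

    boolean isFinalized() { return finalized; }

    // Assumed domain rule, kept inside the entity: expired once today is past the end date.
    boolean retentionExpired(LocalDate today) { return today.isAfter(retentionEnd); }

    void markFinalized() { finalized = true; }
}

// The second, functionally independent use case, visible only as an interface.
interface NotifyResponsibleEmployees {
    void notifyAbout(List<String> finalizedFileIds);
}

// The first use case: completes its own task, then triggers the second one.
final class FinalizeExpiredFiles {
    private final NotifyResponsibleEmployees notification;

    FinalizeExpiredFiles(NotifyResponsibleEmployees notification) {
        this.notification = notification;
    }

    List<String> execute(List<ArchiveFile> files, LocalDate today) {
        List<String> finalizedIds = new ArrayList<>();
        for (ArchiveFile file : files) {
            if (!file.isFinalized() && file.retentionExpired(today)) {
                file.markFinalized();
                finalizedIds.add(file.id());
            }
        }
        // Notify only if something was actually finalized.
        if (!finalizedIds.isEmpty()) {
            notification.notifyAbout(finalizedIds);
        }
        return finalizedIds;
    }
}
```

Because `FinalizeExpiredFiles` only sees the `NotifyResponsibleEmployees` interface, the notification could be an e-mail, a ticket, or a no-op in tests, for example `new FinalizeExpiredFiles(ids -> System.out.println("finalized: " + ids))`.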

Use Cases are exposed through Ports, which serve as their interface to the “outside world.” When an adapter wants to invoke a Use Case, it does so through the corresponding Input Port. In Java, this is an interface that the Use Case implements. When a Use Case needs to read a value from a database, for example, it does so through an Output Port, behind which a database adapter is hidden. This keeps the application layer, where the Use Cases reside, separate from the outer adapter or framework layer.
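The relationship between Input Port, Use Case, and Output Port can be sketched in a few lines of plain Java. This is an illustration under assumptions, not the project’s code: `Document`, `RegisterDocument`, `DocumentRepository`, and the validation rules are invented for the example, and the in-memory repository merely stands in for a real database adapter.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Domain: a minimal, hypothetical entity.
record Document(String id, String title) {}

// Input Port: what the outside world may ask the application to do.
interface RegisterDocument {
    Document register(String id, String title);
}

// Output Port: what the Use Case needs from the outside world.
interface DocumentRepository {
    Optional<Document> findById(String id);
    void save(Document document);
}

// Use Case: implements the Input Port and depends only on the Output Port.
final class RegisterDocumentUseCase implements RegisterDocument {
    private final DocumentRepository repository;

    RegisterDocumentUseCase(DocumentRepository repository) {
        this.repository = repository;
    }

    @Override
    public Document register(String id, String title) {
        if (title == null || title.isBlank()) {
            throw new IllegalArgumentException("title must not be blank");
        }
        if (repository.findById(id).isPresent()) {
            throw new IllegalStateException("document already registered: " + id);
        }
        Document document = new Document(id, title.strip());
        repository.save(document);
        return document;
    }
}

// Adapter: in-memory stand-in for a database adapter behind the Output Port.
final class InMemoryDocumentRepository implements DocumentRepository {
    private final Map<String, Document> store = new HashMap<>();

    @Override
    public Optional<Document> findById(String id) {
        return Optional.ofNullable(store.get(id));
    }

    @Override
    public void save(Document document) {
        store.put(document.id(), document);
    }
}
```

A driving adapter, such as a REST controller, would hold a reference of type `RegisterDocument` and never see `RegisterDocumentUseCase` or the repository directly.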

While the implementation within the application and domain layers is largely technology-agnostic (apart from the programming language itself, of course), the adapter layer deals with specific technologies. For example: Should the application be accessible via an API? Then a RESTful API adapter is created. Should the application access an Oracle database? Then an Oracle DB adapter implements an Output Port. This design ensures that specific technologies (such as concrete databases) never creep into the core of the application but remain an implementation detail that can be swapped out quickly if needed.
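The swap can be illustrated without any framework. In the sketch below, the same Use Case runs unchanged against two adapters for one Output Port. Everything here is an assumption made for illustration: `MetadataStore`, `AddMetadata`, and both adapters are hypothetical, and a file-system adapter takes the place of the Oracle adapter so that the example needs only the JDK.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Output Port owned by the application layer (hypothetical).
interface MetadataStore {
    void put(String fileId, String metadata);
    Optional<String> get(String fileId);
}

// Use Case: knows the port, not the technology behind it.
final class AddMetadata {
    private final MetadataStore store;

    AddMetadata(MetadataStore store) {
        this.store = store;
    }

    String execute(String fileId, String metadata) {
        store.put(fileId, metadata.strip());
        return store.get(fileId).orElseThrow();
    }
}

// Adapter 1: keeps everything in memory, e.g. for tests.
final class InMemoryMetadataStore implements MetadataStore {
    private final Map<String, String> entries = new HashMap<>();

    @Override
    public void put(String fileId, String metadata) {
        entries.put(fileId, metadata);
    }

    @Override
    public Optional<String> get(String fileId) {
        return Optional.ofNullable(entries.get(fileId));
    }
}

// Adapter 2: writes one text file per entry into a directory.
final class FileSystemMetadataStore implements MetadataStore {
    private final Path directory;

    FileSystemMetadataStore(Path directory) {
        this.directory = directory;
    }

    @Override
    public void put(String fileId, String metadata) {
        try {
            Files.writeString(directory.resolve(fileId + ".txt"), metadata);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    @Override
    public Optional<String> get(String fileId) {
        Path path = directory.resolve(fileId + ".txt");
        try {
            return Files.exists(path) ? Optional.of(Files.readString(path)) : Optional.empty();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

Switching technologies then comes down to a different constructor argument: `new AddMetadata(new InMemoryMetadataStore())` versus `new AddMetadata(new FileSystemMetadataStore(someDirectory))`. In a Spring Boot application, this wiring would typically live in configuration rather than in the Use Case.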

And That in a Monolith?

When you read what HA aims to achieve and how it goes about it, micro- or mini-services immediately come to mind. The division into independent hexagons lends itself naturally to deployment as individual, small applications. Why didn’t we do that? Part of the answer is trivial: the client had not requested it, and nothing of the kind had been implemented in the organization before. The other part: given the number of users, the application’s load, and the scaling expected in the future, the additional layer of microservices would have been unnecessarily complex. So why did we choose HA anyway? Because after analyzing the business logic, it quickly became clear that the application would have two clearly separable areas. The first is the core logic: things every archive must be able to do, such as meeting legal documentation requirements, enforcing deletion deadlines, and similar obligations. The second is highly specialized logic that applies only to one specific archive. We wanted to separate the two strictly so that future archives can be integrated easily and obsolete ones removed just as simply.
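One possible way to express this separation inside a single deployable is a small plug-in interface per archive. The sketch below is an assumption about how such a split could look, not a description of our actual code: `ArchiveModule`, `CoreArchiving`, `PersonnelArchive`, the required metadata keys, and the ten-year retention period are all made up for illustration.

```java
import java.time.LocalDate;
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Everything that differs per archive sits behind this hypothetical interface.
interface ArchiveModule {
    String archiveId();
    int retentionYears();
    List<String> validate(Map<String, String> metadata);
}

// Core logic shared by every archive; it knows modules only via the interface.
final class CoreArchiving {
    private final Map<String, ArchiveModule> archives = new LinkedHashMap<>();

    // Integrating a new archive means registering one more module.
    void register(ArchiveModule archive) {
        archives.put(archive.archiveId(), archive);
    }

    // Removing an obsolete archive does not touch the core rules.
    void unregister(String archiveId) {
        archives.remove(archiveId);
    }

    // Core rule: deletion is due once the archive's retention period has elapsed.
    LocalDate deletionDueDate(String archiveId, LocalDate archivedOn) {
        return archivedOn.plusYears(archiveFor(archiveId).retentionYears());
    }

    // Core checks run first, archive-specific checks are appended.
    List<String> validate(String archiveId, Map<String, String> metadata) {
        List<String> errors = new ArrayList<>();
        if (!metadata.containsKey("title")) {
            errors.add("title is required");
        }
        errors.addAll(archiveFor(archiveId).validate(metadata));
        return errors;
    }

    private ArchiveModule archiveFor(String archiveId) {
        ArchiveModule archive = archives.get(archiveId);
        if (archive == null) {
            throw new IllegalArgumentException("unknown archive: " + archiveId);
        }
        return archive;
    }
}

// Example of archive-specific logic (invented values).
final class PersonnelArchive implements ArchiveModule {
    @Override
    public String archiveId() { return "personnel"; }

    @Override
    public int retentionYears() { return 10; }

    @Override
    public List<String> validate(Map<String, String> metadata) {
        return metadata.containsKey("employeeNumber")
                ? List.of()
                : List.of("employeeNumber is required");
    }
}
```

The point of the design is the direction of the dependency: `CoreArchiving` never references `PersonnelArchive`, so adding or removing an archive leaves the core untouched.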

How Did We Implement It?

A few words on the tech stack: our application was and is a Java backend, built with Spring Boot and connected to an Oracle database. As part of the redesign, we added Liquibase to the stack to document and simplify future database changes. The application is exposed to other applications via a RESTful API. Because it is archiving software, it also needs access to an Alfresco system. In addition, we integrated other RESTful APIs.

Challenges and Learnings

Beyond the actual implementation, the biggest challenge was getting all developers on board. HA is neither commonplace nor trivial, so the first difficulty was bringing everyone to the same level of knowledge. Even though HA is a concrete architecture, it still leaves enough latitude for individual design decisions. “Should every class representing a Use Case have a UseCase suffix, or should the name speak for itself?” “Is this already a Use Case or an operation that should be placed in the Entity?” - These are just a few of the questions that arose during development. We quickly learned that in addition to the obligatory style guides and code conventions, an automated architectural check was also necessary. In our project, we used ArchUnit for this purpose; it keeps an eye on the most fundamental rules for us. Once these hurdles were cleared and initial examples were implemented, the pace of implementation picked up quickly. Questions then mostly focused on very specific edge cases. “Should a scheduler sit in the adapter layer or the Use Case layer?” is just one example. All in all, we used every question to solidify the architecture in general and to sensibly extend the rules.

Conclusion

Did the overhaul pay off from our and the users’ perspective? Absolutely! Of course, there were - as with any project - initial difficulties and growing pains. On balance, however, we succeeded in transforming an application that was considered a problem child, had been reimplemented multiple times, and was seen as a necessary evil into a maintainable and flexibly extensible system. The user feedback was also very positive - particularly because the redesign of the domain entities led to a close exchange. This conveyed the feeling that “someone is taking care of things” - a psychological aspect that I want to consciously highlight here.

Questions or suggestions? I look forward to your feedback.
