This article was automatically translated from the German original using AI.

Agent Smith - Reloaded

A game developer who isn’t one allegedly earns a million dollars. Research papers practically write themselves. AI agents, so they say, are the next revolution and will change everything. The hype is real, and the drumbeat is deafening. But what really lies behind the buzzword “AI agent”?

In this article, we dive deep into the world of agentic AI. We clarify what distinguishes a simple chatbot from a true agent, build a practical workflow step by step, and shed light on the unvarnished challenges that lurk on the road to production.

More Than Just a Chat: What Is an AI Agent?

Many of us already use tools such as Perplexity Search, which often run agentic systems behind the scenes. But not everything labeled “agent” actually is one. A simple chat with an LLM, where one input produces one output, is not yet agentic. The real difference lies in the ability to plan purposefully, act autonomously, verify the result, and decide whether the goal has been achieved or the plan needs to be adjusted.

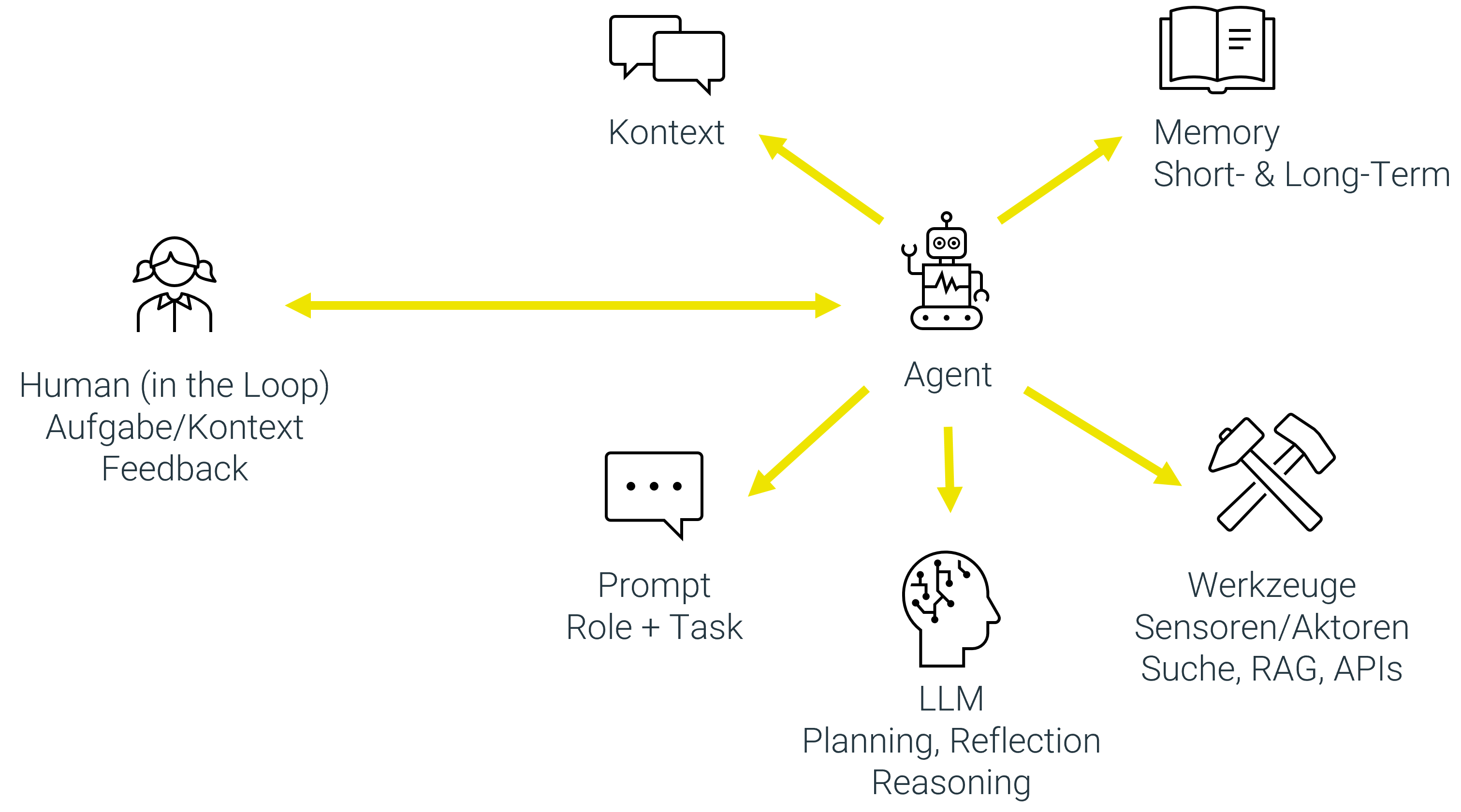

A true AI agent consists of several core components:

- A Large Language Model (LLM) as the brain for planning, reasoning, and interpreting unstructured information.

- Tools to interact with the environment. These can be external APIs (e.g., for weather data), internal systems (e.g., a customer database), a web search, or the ability to execute code in a secure environment.

- Memory to retain information. This ranges from short-term memory (the context of the current conversation) to long-term memory that stores important facts and preferences across interactions.

- Knowledge for targeted lookups. It helps resolve ambiguities, for example by mapping technical terms to their correct definitions or by retrieving the relevant documentation.

Additionally, the agent is provided with the following information:

- A prompt that defines its role and fundamental approach.

- Context with information that helps the agent achieve its goal. Examples include dynamic information about the user, current date/time, details from the task environment, or pointers to best practices, reference architectures, and processes that should be considered during solution finding or planning.

- A clear goal that it should pursue and the constraints that need to be considered. This is typically defined by a human.

The crucial difference between simple chatbots and true agents lies in the following properties that make a system truly agentic:

- Autonomy: The agent can make decisions independently and adjust its plan without waiting for human instructions at every step.

- Environment awareness: It can observe its environment (e.g., read the output of a tool or analyze the content of a webpage) and react to changes.

- Agency: It can actively take action to achieve its goals, not just passively respond.

- Goal orientation: All actions are directed toward an overarching, often complex goal, such as “Organize a business trip to Berlin considering my calendar and budget.”

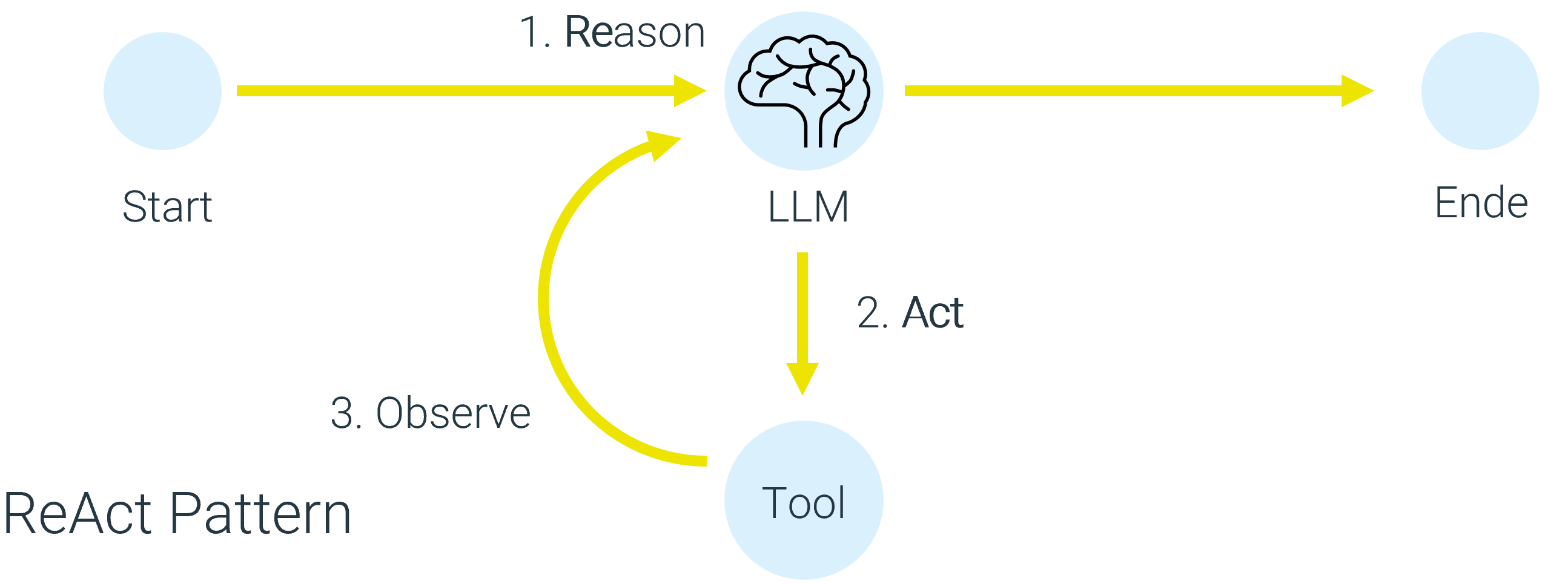

This leads to the central pattern of agentic systems: the ReAct loop (Reason + Act).

- Reason (Think): The agent analyzes the goal and its current situation and creates a plan. “I need to book a trip. First I check the calendar, then I search for flights, then a hotel.”

- Act (Take action): It executes the first step of its plan by using a tool. It calls the calendar tool to find available dates.

- Observe: It evaluates the result of its action. “The calendar shows that the second week of June is free. That’s my new information.”

- Repeat: Has the goal been achieved? No. So the cycle starts over. The agent adjusts its plan (“Okay, now I’ll search for flights for the second week of June”) and executes the next step.

This creates a spectrum: While we have full control with a simple chat or a rigid AI workflow, with an agentic workflow we trade control for autonomy. We don’t know at the start how many steps the agent will need or exactly which path it will take.
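The Reason, Act, Observe, Repeat cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: `scripted_policy` is a hypothetical stand-in for the LLM, the two entries in `TOOLS` return canned answers instead of calling real APIs, and `max_steps` is an assumed safety limit.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    thought: str                 # the agent's reasoning for this step
    tool: Optional[str] = None   # None signals "goal reached, stop"
    tool_input: str = ""


def scripted_policy(goal: str, observations: list[str]) -> Step:
    """Hypothetical stand-in for the LLM: picks the next step from past observations."""
    if not observations:
        return Step("First I check the calendar.", "calendar", "June")
    if len(observations) == 1:
        return Step("Now I search for flights for the free dates.", "flights", observations[-1])
    return Step("Dates and flight are known; the goal is reached.")


# Hypothetical tools with canned answers; real ones would call external APIs.
TOOLS: dict[str, Callable[[str], str]] = {
    "calendar": lambda query: "second week of June",
    "flights": lambda dates: f"flight found for the {dates}",
}


def run_agent(goal: str, policy, tools, max_steps: int = 5) -> list[str]:
    observations: list[str] = []
    for _ in range(max_steps):                    # Repeat, but never forever
        step = policy(goal, observations)         # Reason
        if step.tool is None:                     # the policy considers the goal reached
            break
        result = tools[step.tool](step.tool_input)  # Act
        observations.append(result)               # Observe
    return observations


print(run_agent("Book a trip to Berlin", scripted_policy, TOOLS))
```

Note that the caller does not decide how many tools are used or in which order; the policy does. That is the loss of control the spectrum above refers to, and `max_steps` is the one hard limit the caller keeps.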

Teamwork: The Power of Multi-Agent Systems

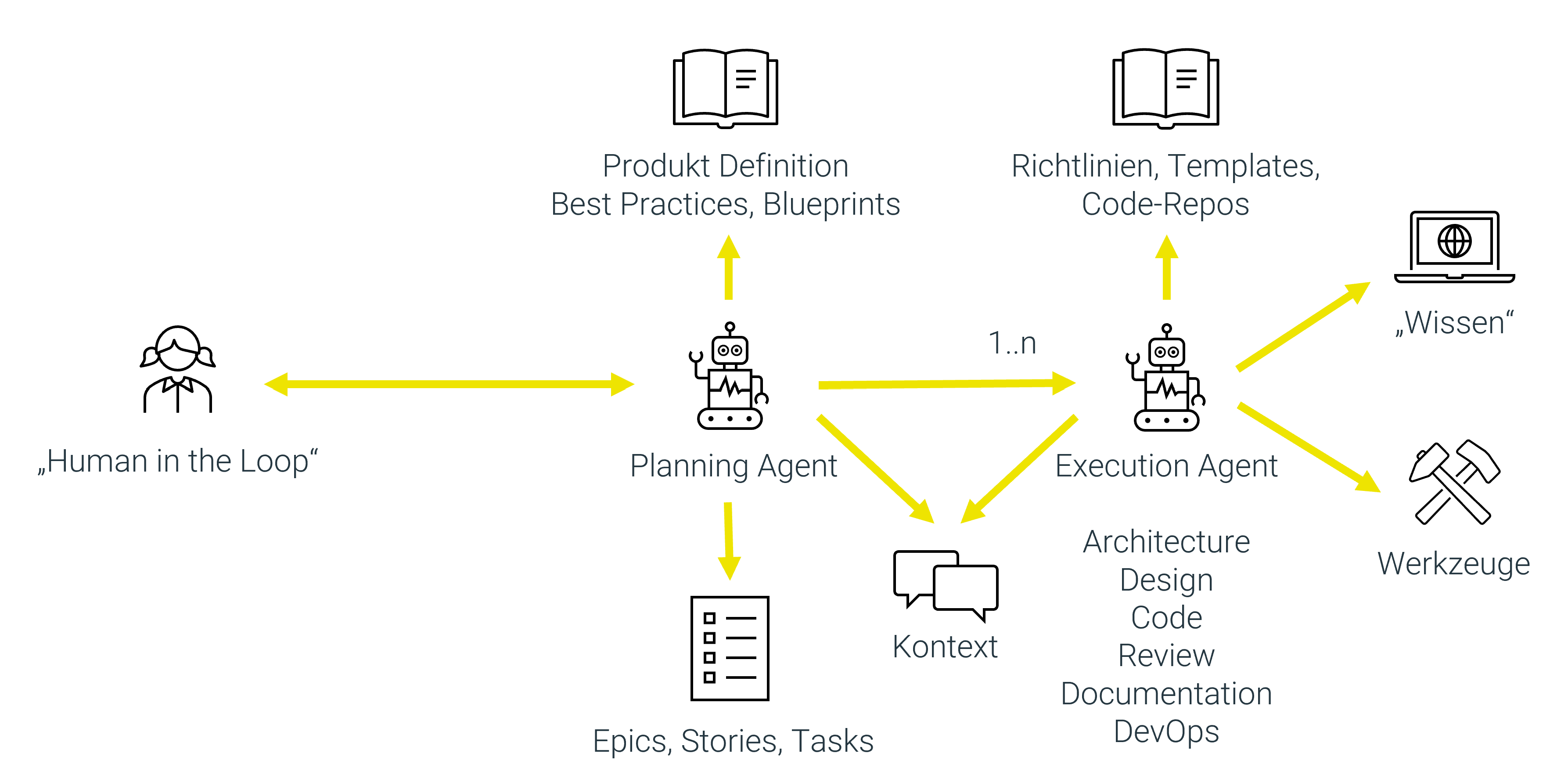

Complex tasks often require specialists. The same principle applies to AI. In a multi-agent system, multiple agents specialized in different tasks work together, similar to an agile team:

- A Planning Agent breaks down the main task into smaller, manageable steps.

- Specialized agents take on tasks such as architecture, coding, review, or documentation. Each of these agents has its own system prompt and its own tools optimized for the task.

- They share a common context and artifacts (e.g., code files in a shared directory) to work toward a common goal.

The collaboration can be organized in different ways:

- Hierarchically: A supervisor agent distributes tasks and collects the results. This enables more control and a clearly defined process.

- As a group chat: The agents communicate freely with each other and dynamically decide who takes on the next task. This offers more autonomy and flexibility but can also be more chaotic.
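The hierarchical variant could look roughly like the following sketch. All names are hypothetical: `planning_agent` returns a fixed plan where a real planner would call an LLM, and each specialist is a plain function that only records what it saw in the shared context.

```python
from typing import Callable

# A specialist takes a task and the shared context and returns its result.
Agent = Callable[[str, dict], str]


def planning_agent(goal: str) -> list[tuple[str, str]]:
    """Hypothetical planner: breaks the goal into (role, task) pairs."""
    return [
        ("architecture", f"Outline a design for: {goal}"),
        ("coding", f"Implement: {goal}"),
        ("review", f"Review the implementation of: {goal}"),
    ]


def make_specialist(role: str) -> Agent:
    def agent(task: str, shared: dict) -> str:
        # A real specialist would have its own system prompt and tools.
        seen = ", ".join(shared) or "nothing"
        return f"[{role}] done: {task} (saw: {seen})"
    return agent


def supervise(goal: str, specialists: dict[str, Agent]) -> dict[str, str]:
    """Supervisor: distributes the planned tasks and collects the results."""
    shared: dict[str, str] = {}  # common context and artifacts
    for role, task in planning_agent(goal):
        shared[role] = specialists[role](task, shared)
    return shared


team = {role: make_specialist(role) for role in ("architecture", "coding", "review")}
for role, output in supervise("CSV export", team).items():
    print(output)
```

In a group-chat setup, the fixed `for` loop would be replaced by a step in which the agents themselves choose the next speaker, which is where the additional flexibility and the additional unpredictability come from.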

An impressive real-world example is the Google Co-Scientist. This multi-agent system analyzes scientific studies, generates new research ideas, and evaluates them based on novelty and likelihood of success. The result is a prioritized list of ideas for human scientists — a perfect example of successful human-machine collaboration.

From Practice: An Agent That Writes Test Cases for User Stories

Theory is fine, but how do you build something like this? Let’s look at a concrete use case: automatic test case generation from user stories.

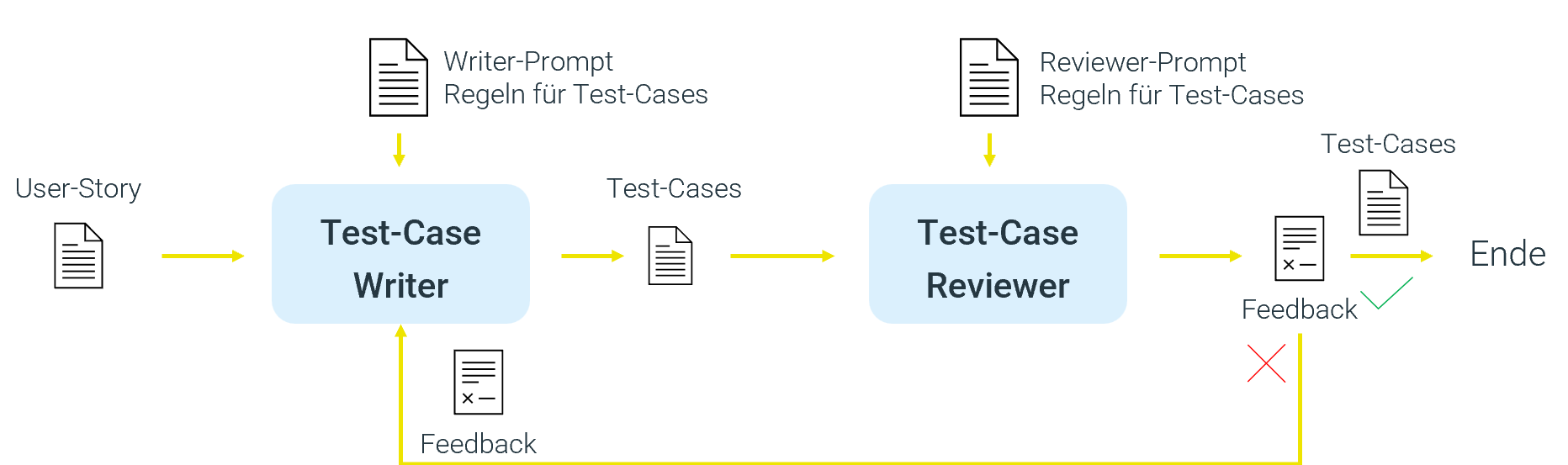

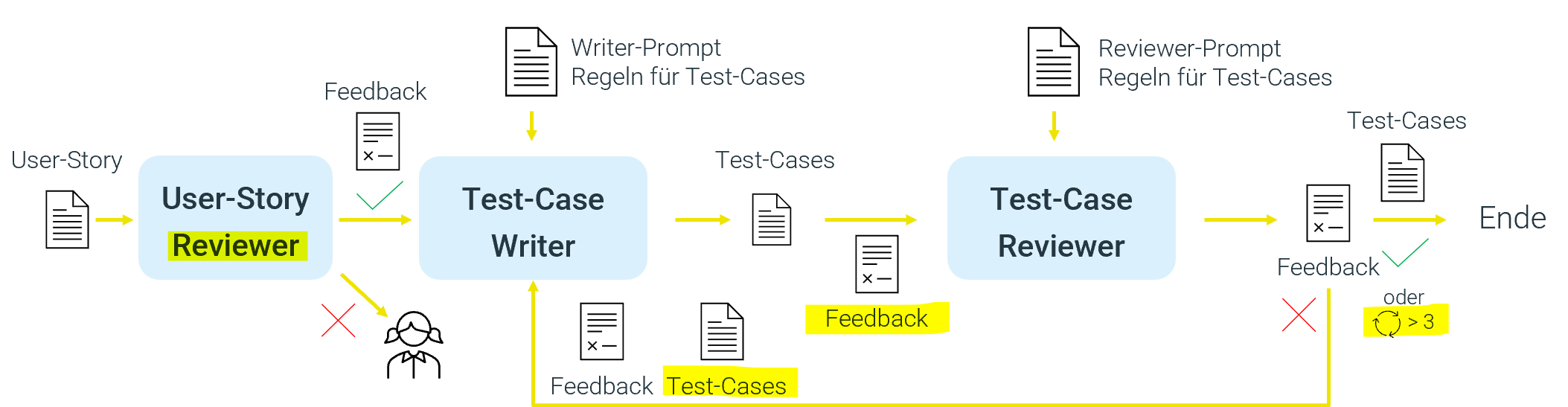

Our workflow receives a user story as input. The “Test Case Writer” agent creates test cases based on predefined rules. A “Test Case Reviewer” agent checks the test cases against defined criteria and decides whether they are acceptable or not. Its feedback goes back to the Writer, who improves the test cases. This loop can repeat multiple times until the test cases meet the quality requirements.

This is a good start, but it is not yet robust.

Points of criticism:

- Garbage in, garbage out: If the user story is poorly written, the Writer produces unusable test cases. There is no quality control of the input.

- Uncontrolled iteration: The Writer and Reviewer could theoretically send things back and forth endlessly, leading to exploding costs and uncontrollable behavior.

- Lack of context: Both the Writer and Reviewer lack the full context of previous iterations, making it harder to converge on a good result.

We refine the process:

- Quality gate: A User Story Reviewer checks the incoming story. Only high-quality stories are forwarded. Poor stories are sent back to the author with feedback.

- Test case creation: A Test Case Writer takes the validated story and creates the appropriate test cases based on rules.

- Review loop: A Test Case Reviewer checks the created test cases. If they are incomplete or incorrect, it sends them back to the Writer with specific feedback.

- Iteration: The Writer receives the story, its original test cases, and the feedback. With this context, it can now create an improved version. This loop can repeat multiple times, but only up to a maximum number of iterations (e.g., five attempts).

- Context engineering: For each revision, the Writer should receive the original story and its previous test cases together with the Reviewer's feedback, so that it can improve the result in a targeted way. The Reviewer should likewise see all artifacts, so that its feedback builds on earlier iterations.
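The refined process can be sketched as a small control loop. The three agent functions below are hypothetical stubs with hard-coded rules (a real workflow would call an LLM in each of them), and the default of five iterations is taken from the example above.

```python
from dataclasses import dataclass, field


@dataclass
class Attempt:
    test_cases: list[str]
    feedback: str  # an empty string means "accepted"


@dataclass
class RunResult:
    accepted: bool
    history: list[Attempt] = field(default_factory=list)
    reason: str = ""


def review_story(story: str) -> str:
    """Quality gate stub: returns feedback, or "" if the story is acceptable."""
    return "" if "so that" in story.lower() else "State the benefit ('so that ...')."


def write_test_cases(story: str, history: list[Attempt]) -> list[str]:
    """Writer stub: sees the story plus all earlier attempts and their feedback."""
    cases = ["happy path"]
    if history:  # react to earlier feedback
        cases.append("error path")
    return cases


def review_test_cases(story: str, cases: list[str], history: list[Attempt]) -> str:
    """Reviewer stub: also receives the full history."""
    return "" if "error path" in cases else "Add a test for the error path."


def run_workflow(story: str, max_iterations: int = 5) -> RunResult:
    gate_feedback = review_story(story)
    if gate_feedback:  # poor stories go back to the author
        return RunResult(False, [], f"story rejected: {gate_feedback}")
    history: list[Attempt] = []
    for _ in range(max_iterations):  # hard termination condition
        cases = write_test_cases(story, history)
        feedback = review_test_cases(story, cases, history)
        history.append(Attempt(cases, feedback))
        if not feedback:
            return RunResult(True, history)
    return RunResult(False, history, "iteration limit reached")


result = run_workflow("As a user I want to log in so that I can see my orders")
print(result.accepted, len(result.history))
```

The `history` list is the context-engineering part: both stubs receive every earlier attempt together with its feedback, instead of only the latest version.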

Important lessons from this example:

- Context is everything: The agent must receive exactly the information it needs for the next step — no more and no less. This is why the term “context engineering” has become established, describing the careful curation and structuring of input for each agent.

- Termination conditions are mandatory: An agent loop can theoretically run forever if, for example, the Reviewer is too critical or the Writer is too poor. A maximum number of iterations (e.g., five attempts) is essential to avoid infinite loops and exploding costs.

- Structured data helps: Instead of having the agent output plain text, you should instruct it to generate structured data (e.g., JSON). This makes the results machine-readable and the workflow controllable.
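To illustrate the point about structured data: if the Reviewer is instructed to answer in JSON, the workflow can validate the answer before acting on it. The schema below (a `verdict` field and an `issues` list) is an assumption made for this sketch, not a format prescribed by any framework.

```python
import json

# Assumed schema for the Reviewer's answer (hypothetical field names).
REQUIRED_FIELDS = {"verdict": str, "issues": list}
ALLOWED_VERDICTS = {"accept", "revise"}


def parse_review(raw: str) -> dict:
    """Parse and validate a reviewer answer; raise ValueError if it is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(key), expected_type):
            raise ValueError(f"field '{key}' is missing or not a {expected_type.__name__}")
    if data["verdict"] not in ALLOWED_VERDICTS:
        raise ValueError(f"unknown verdict: {data['verdict']!r}")
    return data


review = parse_review('{"verdict": "revise", "issues": ["No test for empty password"]}')
print(review["verdict"], review["issues"])
```

A `ValueError` gives the workflow a clear branch: it can ask the agent to repeat its answer in the expected format instead of passing malformed text to the next step.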

The Unvarnished Truth: Challenges and Pitfalls

Despite the potential, the path to a production-ready agent is rocky. The biggest hurdles are:

- Non-determinism: LLMs are inherently non-deterministic. The same input can lead to slightly different results. This makes testing and ensuring consistent quality extremely difficult.

- The “black box”: For the user, it is often unclear what an agent is doing, why it made a particular decision, and how much longer it will take. There is a lack of transparency, which undermines trust. Good visualizations and status updates are crucial here.

- Quality of tools: An agentic workflow is only as good as its weakest component. A web scraping tool that delivers messy data or a poorly documented API inevitably leads to poor results.

- Cost and efficiency: The most capable LLMs are expensive and slow. For each task, the right trade-off between cost, speed, and quality must be found. Not every task requires GPT-5 “extreme reasoning.”

- Appropriate use: Agents are not the solution to every problem. Often, a “boring” predefined workflow with suitable AI components for individual tasks and human review and oversight is the more robust and reliable solution.
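One common way to test despite non-determinism is to check properties that every acceptable output must have, rather than comparing against one exact expected text. The sketch below assumes a hypothetical `fake_writer` that imitates varying LLM output with a seeded random generator; the invariants, including the keyword heuristic for negative tests, are examples only.

```python
import random


def fake_writer(story: str, rng: random.Random) -> list[str]:
    """Stand-in for a non-deterministic LLM call: wording and order vary per run."""
    positive = rng.choice(["Valid login succeeds", "User logs in with correct password"])
    negative = rng.choice(["Wrong password is rejected", "Login fails with a bad password"])
    cases = [positive, negative]
    rng.shuffle(cases)
    return cases


def check_invariants(cases: list[str]) -> list[str]:
    """Return a list of violated invariants; an empty list means the output passes."""
    problems = []
    if not cases:
        problems.append("no test cases")
    if any(not case.strip() for case in cases):
        problems.append("empty test case")
    if len(set(cases)) != len(cases):
        problems.append("duplicate test cases")
    # Crude keyword heuristic, assumed for this sketch only.
    if not any(word in case.lower() for case in cases for word in ("rejected", "fails")):
        problems.append("no negative test case")
    return problems


def pass_rate(story: str, runs: int = 20) -> float:
    """Run the writer several times and report the share of runs that pass."""
    passed = sum(
        check_invariants(fake_writer(story, random.Random(seed))) == []
        for seed in range(runs)
    )
    return passed / runs


print(pass_rate("As a user I want to log in so that I can see my orders"))
```

With a real model, the pass rate over many runs becomes a quality metric that can be tracked across prompt or model changes, which is more informative than a single pass or fail.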

Hype vs. Reality: The State of Agents

The initial hype around AI agents as the “Next Big Thing” is beginning to fade, and critical voices are growing — coming precisely from those developing at the forefront.

Andrej Karpathy, former OpenAI scientist, sums up the growing disappointment: “Agents simply don’t work.” He says today’s agents lack fundamental capabilities: they are neither intelligent nor multimodal enough, cannot reliably control end devices, and are cognitively disappointing. A core problem is the lack of continuous learning; you cannot tell them something they will remember later.

Although Karpathy considers these problems solvable, they require massive effort. Based on his 15 years of experience in AI research, he predicts that it will take “at least a decade” before we see truly versatile AI agents. Currently, he concedes, agents only work smoothly in narrowly defined niches, such as simple copy-paste tasks in coding. But that is far from a true agent that must master all tasks well — and not just text-based ones like code, but also visual ones like creating presentations.

Conclusion: Agents Are Here to Stay and Genuinely Useful — If We Deploy Them Correctly

Even though AI agents are still in their early stages and research still needs to work on the reliability of LLMs in the medium term, they represent a fundamentally new way to automate complex, multi-step processes. Short-term success, however, lies not in blind trust in the autonomy of generic multi-agent systems, but in thoughtful system design and targeted deployment.

The key takeaways are:

- Weigh control vs. autonomy: Not every process needs to be fully autonomous. Often, well-defined workflows with AI augmentation are the more robust and reliable solution.

- Quality starts with the data: “Garbage in, garbage out” applies here more than ever. High-quality, clean input data is half the battle.

- Humans stay in the loop: For critical processes, human review is often essential to prevent far-reaching consequences from erroneous decisions and to maintain control.

- Complexity lies in the details: Writing the agent code is often the easiest part. The real challenge lies in careful prompt and context engineering, designing reliable tools, and — if required for the use case — coordinating the agents.

Despite all the justified criticism: we often overestimate what is possible in the short term but underestimate the long-term transformation. AI agents will not replace all developers overnight, but they will, much like personal assistants, increasingly be integrated into our daily lives and workflows. They will fundamentally change the way we develop software and interact with digital systems. The future is agentic, and it is time to prepare for it.
