LangSmith: Debug, Trace, Evaluate and Monitor LangChain-powered LLM-Apps

After developing with LangChain for a while, we have come to appreciate the power of the framework. However, its powerful abstractions also have pitfalls, especially when it comes to debugging and understanding what LangChain does internally, its “magic”.

In this post we want to introduce LangSmith, a platform developed by Harrison Chase, the creator of the LangChain Framework. LangSmith offers a comprehensive solution for developing, debugging, tracing, testing, evaluating, and continuously monitoring LangChain-powered applications in production environments.

Sounds interesting? Then let’s take a closer look.

LangSmith Overview

At first, the naming can be confusing, since several names for the service are in circulation: LangSmith, LangChain Plus Platform, LangChain Hub… Here is an overview according to our current understanding, with the caveat that the names may still change while LangSmith is in private beta.

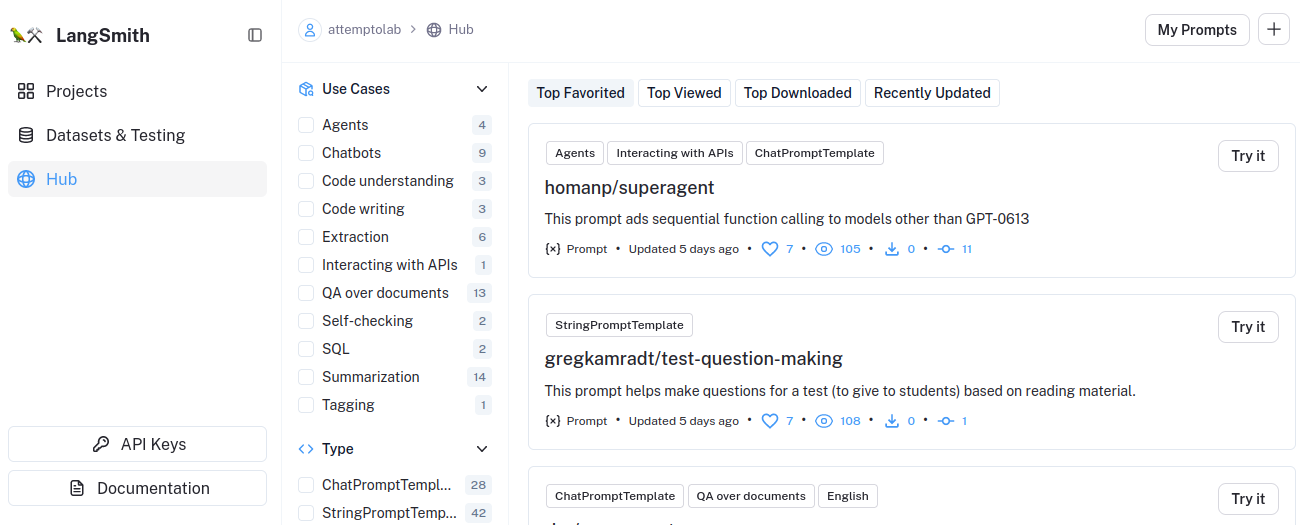

- LangSmith is the name of the platform supporting the development, testing and operation of LLM-based applications.
- LangChain Hub is a directory for prompts that can be searched by criteria and dynamically loaded by LangChain.
- LangChain Plus Platform is still occasionally used synonymously with LangSmith. Currently “https://www.langchain.plus/” redirects to “https://smith.langchain.com/”, so we guess that LangSmith will be the final name for the platform.

It is not our goal to give a complete introduction to the LangSmith platform with all its use cases in this short article. Rather, we want to make you aware of the tool and give you a brief outline of its capabilities.

LangSmith

Now that we have a rough idea of what LangSmith is, let’s take a look at what it can do for us. You can find a detailed description of the use cases in the LangSmith documentation.

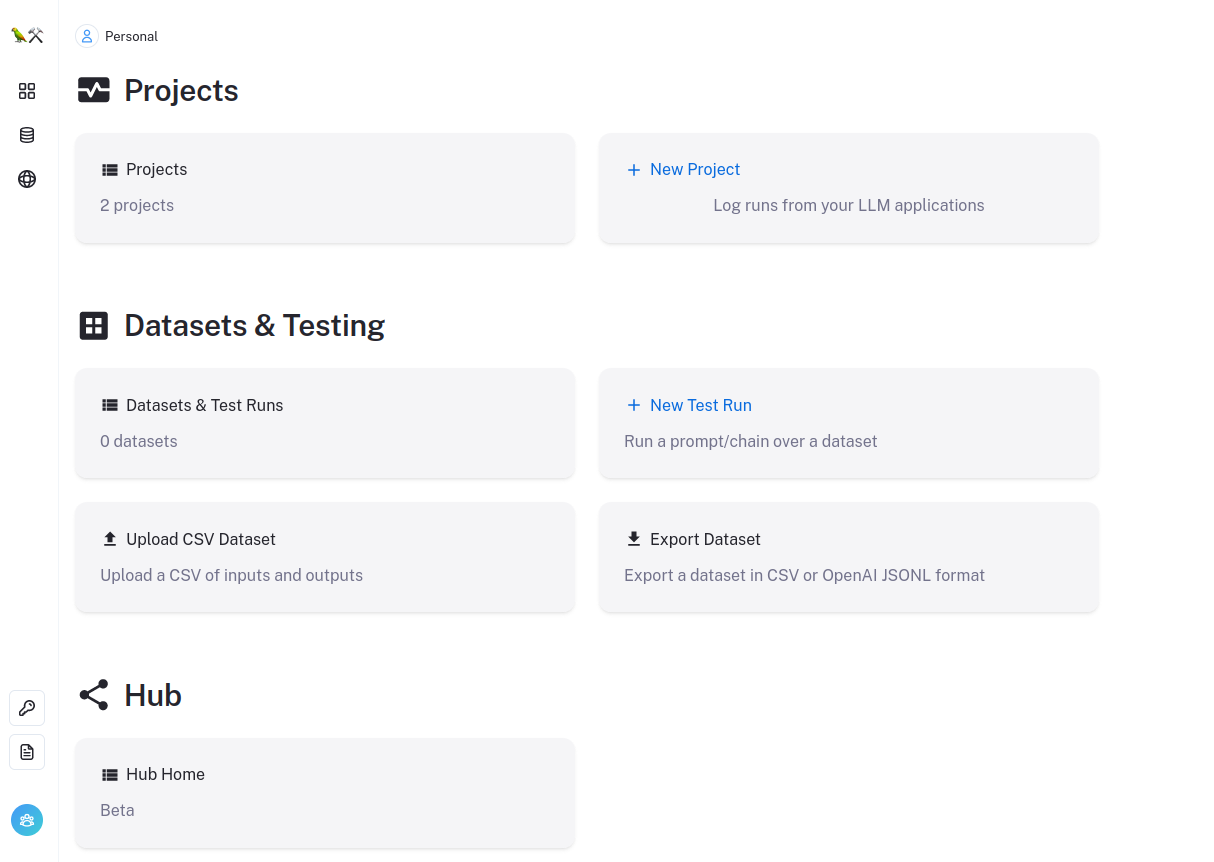

On the LangSmith Dashboard you see the main Elements of the platform:

- Projects for Logging, Tracing and Monitoring
- Datasets and Testing for Development and Evaluations
- Hub for Collaboration on and Sharing of Prompts

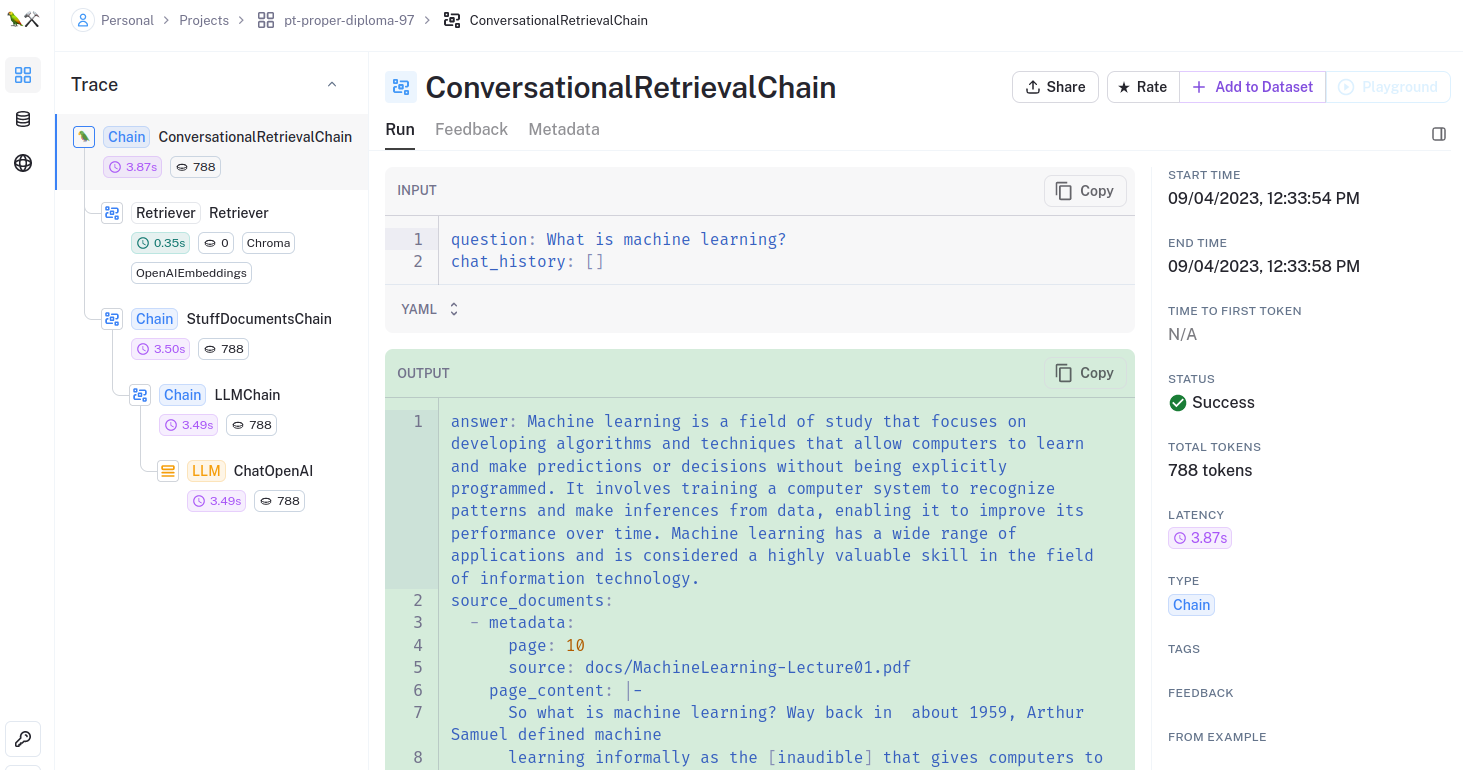

Tracing & Debugging

At its core, LangSmith traces the interactions within your LangChain application. It records LLM requests and responses along with the internal processing steps, giving you a granular view of your application’s execution. You can inspect each step of a LangChain run, which helps both when you want to understand how LangChain works internally and when you need to track down elusive bugs in your application.

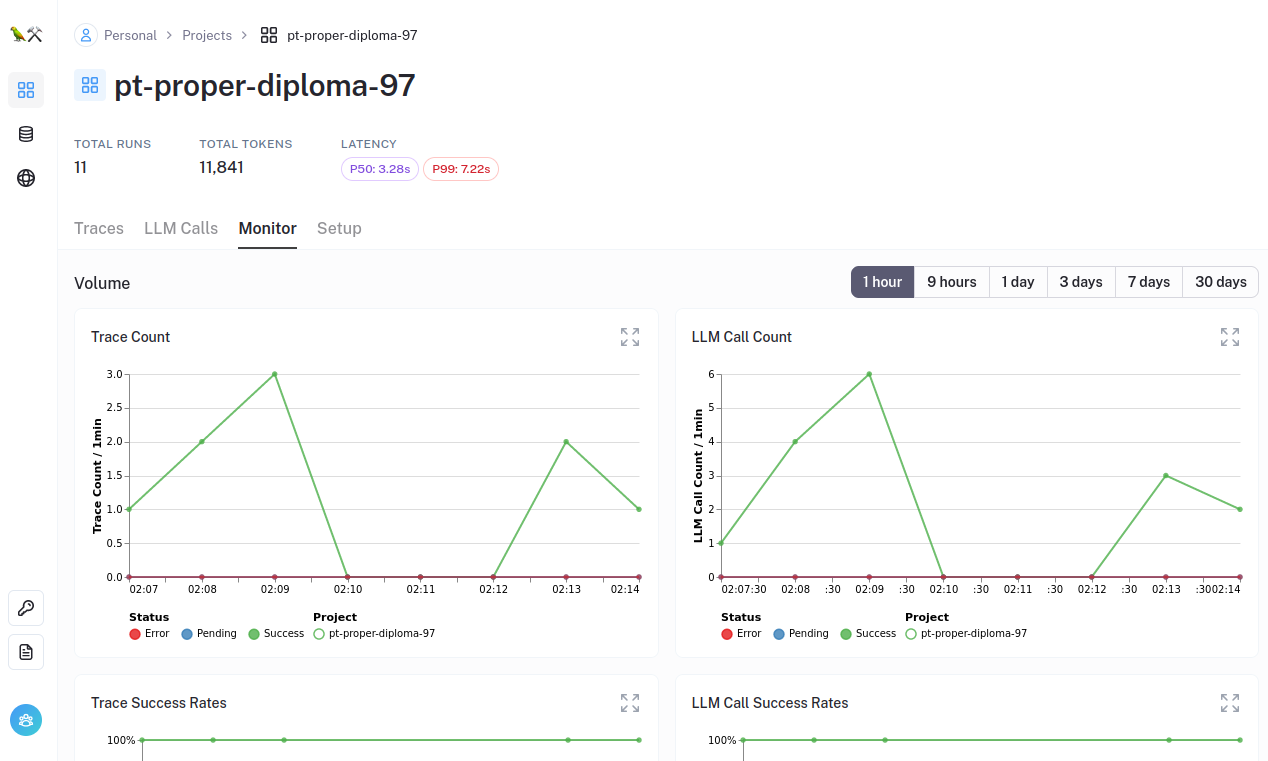
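To make the idea of a trace more concrete, here is a minimal sketch in plain Python of what recording each step of a chain could look like. It is an illustration of the concept only, not LangSmith's implementation or API: the `traced` decorator, the in-memory `TRACE_LOG` list, and the `fake_llm` stand-in are all hypothetical names introduced for this example.

```python
import functools
import time

# Hypothetical in-memory trace log; LangSmith stores runs on its platform instead.
TRACE_LOG = []


def traced(step_name):
    """Record inputs, output, and duration of each call to the wrapped function."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            result = func(*args, **kwargs)
            # The record is appended when the step finishes, so inner steps
            # appear in the log before the outer step that called them.
            TRACE_LOG.append({
                "step": step_name,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": result,
                "seconds": time.perf_counter() - start,
            })
            return result
        return wrapper
    return decorator


@traced("build_prompt")
def build_prompt(question):
    return f"Answer briefly: {question}"


@traced("fake_llm")
def fake_llm(prompt):
    # Stand-in for a real LLM call so the sketch runs offline.
    return prompt.upper()


@traced("chain")
def chain(question):
    return fake_llm(build_prompt(question))


answer = chain("What is LangSmith?")
for record in TRACE_LOG:
    print(record["step"], "->", record["output"])
```

Each record holds the inputs, the output, and the duration of one step, which is roughly the kind of information a trace view lets you inspect per step.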

Monitoring & Analysis

LangSmith supports monitoring and continuous analysis of LLM applications. You can track your application’s performance over time, which is especially important as the underlying LLMs evolve. By charting how model changes affect results, LangSmith helps you respond to subtle shifts in your application’s behavior in a production environment.

Additionally, LangSmith enables you to distill valuable insights by summarizing the usage patterns of your application.

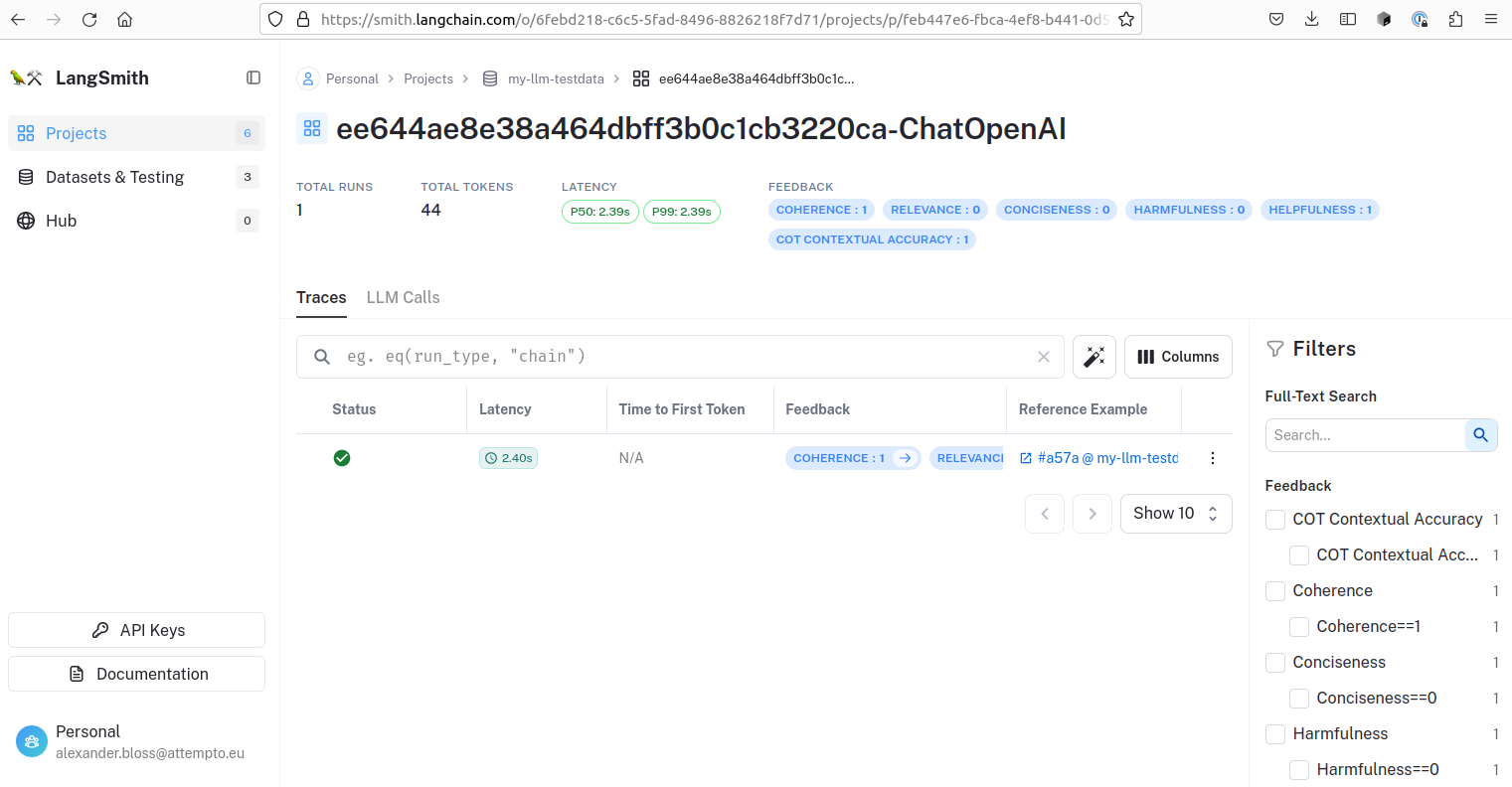

Testing & Evaluation

LangSmith also supports testing and evaluating LLM applications; the LangSmith documentation describes the capabilities in detail. Using prebuilt evaluators, you can run a wide range of checks on the responses generated for your test prompts, including correctness, conciseness, relevance, coherence, harmfulness, maliciousness, helpfulness, controversiality, misogyny, criminality and insensitivity, and you can build your own evaluators. As test data, you can use simple prompt/response pairs or more complex chat-like conversations with user, system, and function messages to provide exact test cases for your application. This lets you compare your application’s outcomes rigorously against a predefined gold standard.

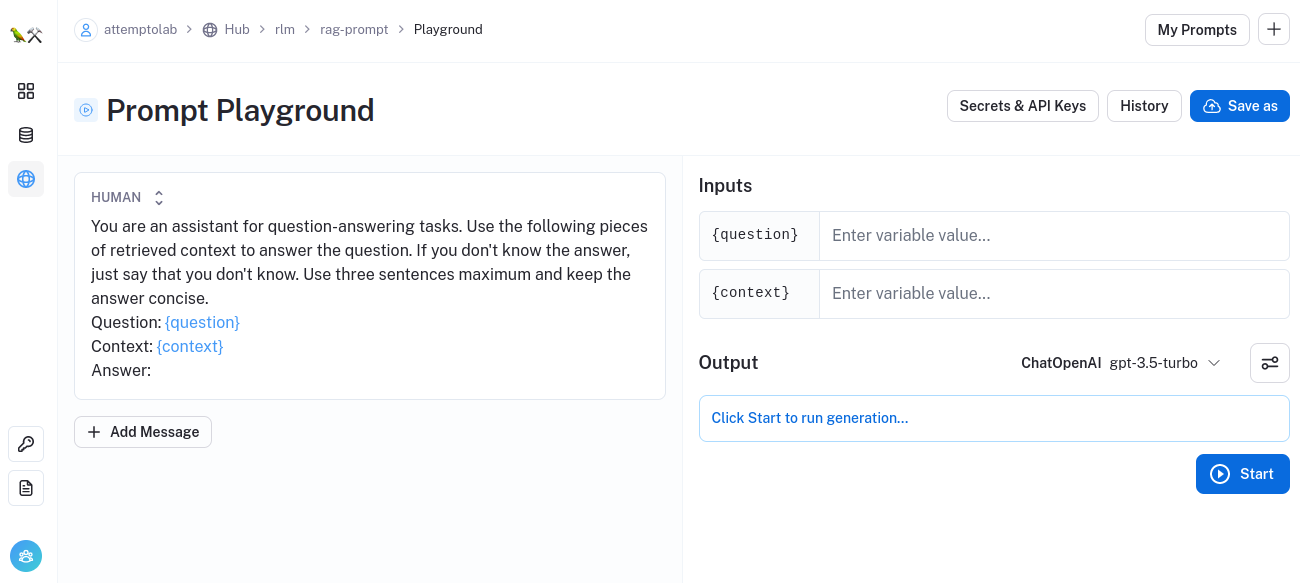
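The comparison against a gold standard described above can be sketched in a few lines of plain Python. This is not LangSmith's evaluator API; `exact_match`, `evaluate`, `toy_app`, and the tiny dataset are hypothetical names and data made up for illustration, and a real evaluator would usually be more forgiving than an exact string comparison.

```python
def exact_match(prediction, reference):
    """Return 1.0 if both strings match after lowercasing and whitespace cleanup."""
    def normalize(text):
        return " ".join(text.lower().split())
    return 1.0 if normalize(prediction) == normalize(reference) else 0.0


def evaluate(app, dataset, evaluator=exact_match):
    """Run the app on every example and score each output against its reference."""
    results = []
    for example in dataset:
        prediction = app(example["input"])
        results.append({
            "input": example["input"],
            "prediction": prediction,
            "score": evaluator(prediction, example["reference"]),
        })
    mean_score = sum(r["score"] for r in results) / len(results) if results else 0.0
    return mean_score, results


# Hypothetical gold-standard dataset of prompt/response pairs.
dataset = [
    {"input": "2+2", "reference": "4"},
    {"input": "capital of France", "reference": "Paris"},
    {"input": "largest planet", "reference": "Jupiter"},
]


def toy_app(question):
    # Stand-in for a LangChain application so the sketch runs offline.
    answers = {"2+2": "4", "capital of France": "paris "}
    return answers.get(question, "unknown")


mean_score, results = evaluate(toy_app, dataset)
for r in results:
    print(r["input"], "->", repr(r["prediction"]), "score:", r["score"])
print("mean score:", round(mean_score, 2))
```

Swapping `exact_match` for another scoring function is the plain-Python analogue of choosing a different evaluator for the same dataset.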

Collaboration & Playground

In addition, LangSmith serves as a collaborative platform through LangChain Hub. Here you can discover, share, and version-control prompts, which can be loaded dynamically at runtime within LangChain. This lets you learn from the community of fellow developers, researchers and enthusiasts, and pick up insights and best practices to improve your LangChain application.

If you have found an interesting prompt, you can try it out in the LangSmith Playground with your OpenAI API-Key.

So now that we know what LangSmith is and what it can do, let’s take a look at how to use it.

Get Started

In order to use LangSmith for your own experiments, you need to create an account at LangSmith and generate an API-Key from your account settings. Then you can use this API-Key to set your environment variables accordingly.

export LANGCHAIN_TRACING_V2=true

export LANGCHAIN_ENDPOINT="https://api.smith.langchain.com"

export LANGCHAIN_API_KEY="<your-api-key>"

export LANGCHAIN_PROJECT="<your-project>"

# launch your LangChain Python application

python myLanChainApp.py

However, there is a catch: at the time of writing, LangSmith is still in private beta, and you need an invitation code to access the platform. Fortunately, we have a solution for you; read on to find it.
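If you prefer to configure tracing from inside a script or notebook rather than in the shell, the same four environment variables can be set from Python. The sketch below assumes that the variables only need to be present in the process environment before your LangChain code runs; `configure_langsmith` is a hypothetical helper name, not part of LangChain or LangSmith.

```python
import os


def configure_langsmith(api_key, project,
                        endpoint="https://api.smith.langchain.com"):
    """Set the environment variables from the shell example for this process."""
    os.environ["LANGCHAIN_TRACING_V2"] = "true"
    os.environ["LANGCHAIN_ENDPOINT"] = endpoint
    os.environ["LANGCHAIN_API_KEY"] = api_key
    os.environ["LANGCHAIN_PROJECT"] = project


# Placeholders as in the shell example; replace them with your own values.
configure_langsmith(api_key="<your-api-key>", project="<your-project>")

# Import and run your LangChain code after this point (assumption: the
# variables must be set before the traced code executes).
print(os.environ["LANGCHAIN_PROJECT"])
```

Avoid committing a real API key to source control; reading it from a secrets store or an untracked file is the safer choice.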

How to get access to the private beta

If you wonder how to get access to the LangSmith Platform during the private beta, we have good news for you: just enroll in the free course LangChain for LLM Application Development at DeepLearning.ai, taught by Harrison Chase, and open the Jupyter notebook in the chapter “Evaluation”. There you’ll find the cell “LangChain evaluation platform”, which contains an invitation code, so that you can use the platform immediately.

Conclusion

LangSmith is a powerful tool to debug, trace, evaluate and monitor your LangChain-powered LLM-Apps. Time will tell how the platform will evolve and whether Harrison Chase’s vision of a collaborative platform for LLM-Apps will come true, but we are confident that at least the tracing and monitoring capabilities will be a great help for developers and researchers. If you are interested in LangChain and LLMs, you should definitely take a look at LangSmith.
